A customer success team shipped a playbook last quarter. Any account with a drop in NPS or a support ticket about pricing got a proactive call from a CSM within 48 hours. The executive deck sold it on a regression: customers who took a call were 31% more likely to renew.

Six months later, retention was flat. The cost of the program was real. The lift was not. The CSMs were exhausted calling accounts that would have renewed anyway, and the truly at-risk accounts churned on schedule.

This is the correlation fallacy, dressed up in a dashboard. "Customers who call support churn less" is the textbook example, and CX teams act on it every week. To know why a customer stayed, you need causal inference: causal graphs, propensity matching, do-calculus, uplift modeling. Most teams skip this because sklearn made them lazy. A LogisticRegression().fit() looks identical whether the data was randomized or not, and nothing in your notebook will warn you.

What follows is a hands-on walk through the pipeline for engineers who have scikit-learn muscle memory but have never opened DoWhy. You'll build a working causal analysis step by step and end with a retention model that survives contact with reality.

Prerequisites

You need Python 3.11+ with pandas, scikit-learn, dowhy, and econml, plus httpx for the API example near the end. Install them with:

```bash
python -m venv .venv && source .venv/bin/activate
pip install pandas scikit-learn numpy httpx
pip install dowhy econml
```

For the TypeScript wiring at the end you need Node 20+ and the @chanl/sdk package. No prior causal inference knowledge is assumed. If you can fit a logistic regression with LogisticRegression().fit(X, y), you have enough background.

You should also have a dataset of customers with at least four columns: a pre-period feature (like NPS score), a treatment flag (did a CSM call?), a post-period outcome (did they renew?), and any covariates you can get your hands on. We will simulate one so the code is runnable as-is.

The $4M retention playbook that moved nothing

A story first, because the math will land harder with it.

A B2B software company ran the following for two quarters: any workspace with an NPS drop below 7 got flagged for proactive CSM outreach. The CSMs logged roughly 4,000 calls. Net new retention program cost: about $4M in labor plus tooling.

Here is a simulation of the analysis behind the "impact" deck's headline number.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(42)
n = 20_000

# "motivation" is unobserved in the real world. We use it here to generate
# both the treatment (a CSM called) and the outcome (the customer renewed).
motivation = rng.normal(size=n)

# The CSM team prioritized motivated, engaged accounts (the ones who answer the phone).
pr_call = 1 / (1 + np.exp(-(0.2 + 1.1 * motivation)))
call = (rng.uniform(size=n) < pr_call).astype(int)

# Motivated accounts also renew more, regardless of the call.
# The TRUE causal effect of a call is modest: 0.18 on the log-odds scale,
# which works out to roughly +3 to +4 pp of renewal on average.
pr_renew = 1 / (1 + np.exp(-(-0.2 + 1.3 * motivation + 0.18 * call)))
renew = (rng.uniform(size=n) < pr_renew).astype(int)

df = pd.DataFrame({"call": call, "renew": renew, "motivation_proxy": motivation})

# The naive analysis a dashboard would show.
naive = df.groupby("call")["renew"].mean()
lift = naive[1] - naive[0]
print(f"Naive difference in renewal: {lift * 100:.1f} percentage points")
```

Run that and you get a "lift" of roughly 25 to 30 percentage points from being called. That is the number the deck showed. The CSMs got a raise. The company scaled the program. The renewal rate did not move, because the number is not what it looks like.

Correlation you measured. Causation you wanted.

The regression measured something true: among customers who took a call, renewal was higher. But the CSMs didn't assign calls randomly. They prioritized accounts that picked up the phone and engaged, which are exactly the accounts that renew whether you call them or not. The call didn't cause the renewal. The willingness to pick up the phone caused both.

This is confounding. A confounder is a variable that drives both the treatment (taking a call) and the outcome (renewing). Motivation plays that role in the simulation above. When a confounder is present and you don't control for it, your regression coefficient is a mongrel: partly the real effect, partly the confounder leaking through. You can't tell which is which from the coefficient alone.
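The "mongrel" coefficient is easy to see directly. The sketch below regenerates the simulated data from the first script (same process and seed) and fits two scikit-learn logistic regressions: one on the call flag alone, and one that also includes motivation. Conditioning on motivation itself is only possible because the data is simulated; in production you would need a measured proxy. The 0.18 the adjusted model should recover is the log-odds effect baked into the simulation.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression

# Same data-generating process and seed as the simulation above.
rng = np.random.default_rng(42)
n = 20_000
motivation = rng.normal(size=n)
call = (rng.uniform(size=n) < 1 / (1 + np.exp(-(0.2 + 1.1 * motivation)))).astype(int)
renew = (rng.uniform(size=n) < 1 / (1 + np.exp(-(-0.2 + 1.3 * motivation + 0.18 * call)))).astype(int)

# Naive model: renewal on the call flag alone, so motivation leaks into the coefficient.
# C=1e6 effectively turns off scikit-learn's default L2 penalty.
naive = LogisticRegression(C=1e6).fit(call.reshape(-1, 1), renew)

# Adjusted model: also condition on the confounder.
adjusted = LogisticRegression(C=1e6).fit(np.column_stack([call, motivation]), renew)

naive_coef = naive.coef_[0][0]
adjusted_coef = adjusted.coef_[0][0]
print(f"Call coefficient, naive:    {naive_coef:.2f} log-odds")
print(f"Call coefficient, adjusted: {adjusted_coef:.2f} log-odds (simulated truth: 0.18)")
```

Both fits look equally "successful" in a notebook; only the graph tells you which coefficient to trust.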

Judea Pearl's The Book of Why turns on this one idea. Two variables can move together without one causing the other, and the only way to untangle them is to draw the causal structure and argue with it on paper [1]. That's what the rest of this article does.

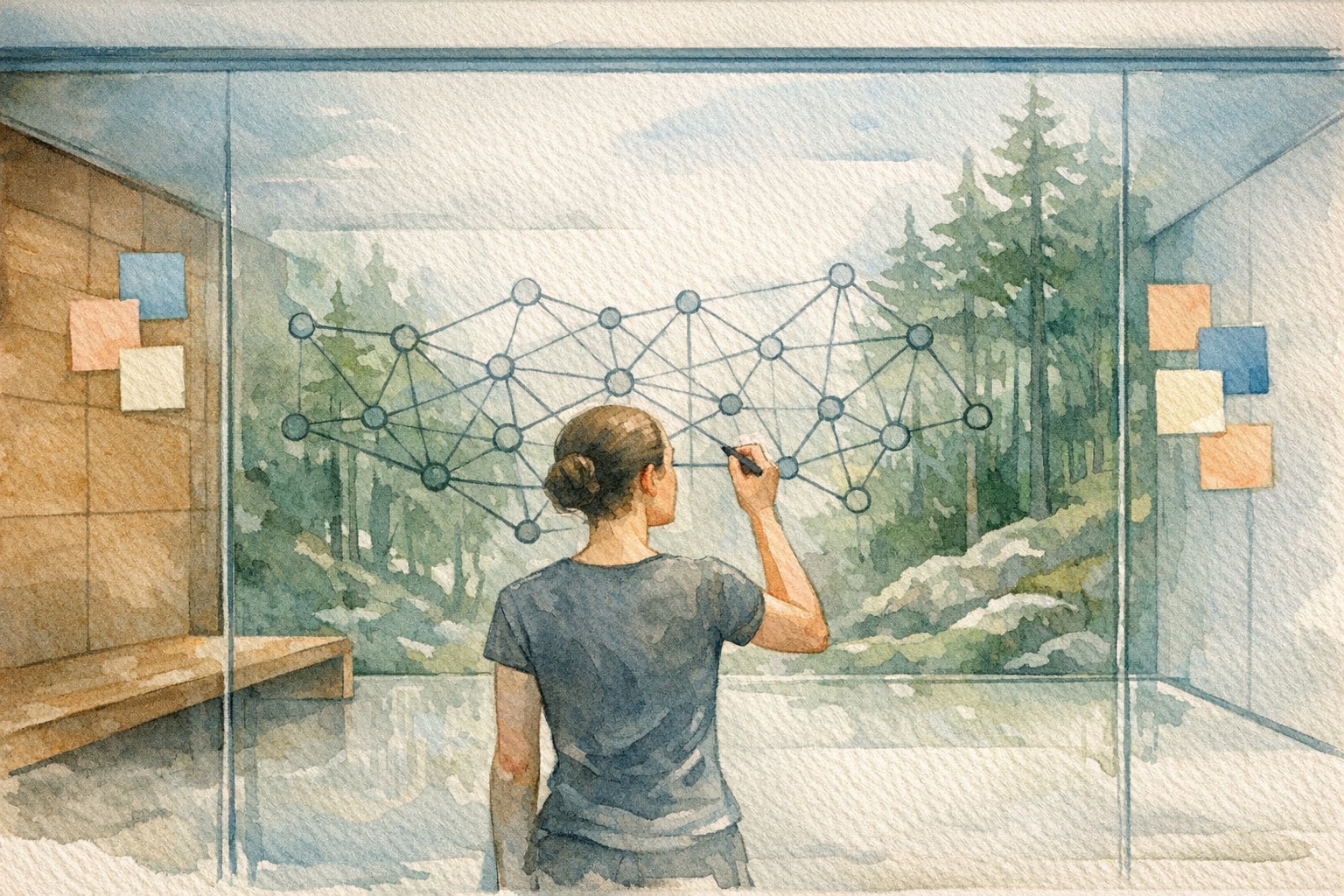

Drawing the DAG (the 2-minute habit that saves quarters)

Before any code, draw the graph on a whiteboard or a napkin. A causal graph (also called a DAG, for directed acyclic graph) is a picture of what causes what. Variables are nodes. Arrows go from cause to effect. That's it. This habit is the single largest gap between engineers who trust their numbers and engineers who get surprised by them.

For the CSM outreach program, the core of the graph has three nodes: motivation (M), the CSM call (C), and renewal (R), with arrows M -> C, M -> R, and C -> R.

The arrow we care about is C -> R. That is the causal effect we want: what would renewal be if we intervened and called this customer? Everything else is confounding we have to block.

Look at the backdoor path C <- M -> R. That path carries association from C to R that has nothing to do with whether the call worked. To measure the real effect, we have to block that path by conditioning on M (or a good proxy for it). This is Pearl's backdoor criterion [2]: find a set of variables, none of which is a descendant of the treatment, that blocks every backdoor path; condition on that set, and the remaining association is causal.

The practical takeaway: your regression needs to include motivation, or something that captures it, before its coefficient on call means anything. In the real world you never observe motivation directly. What you can do is measure observable proxies for it (engagement, responsiveness, usage depth) and build a propensity model on them.
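The backdoor criterion is mechanical enough to check in a few lines of plain Python. This is a minimal sketch, not a substitute for DoWhy's identification step: the edge list encodes only the graph implied by the backdoor path C <- M -> R plus the arrow C -> R (M = motivation, C = call, R = renewal), and the helper functions are illustrative. They enumerate backdoor paths and apply the standard d-separation blocking rule to each one.

```python
# Hypothetical three-node graph: M = motivation, C = call, R = renewal.
EDGES = [("M", "C"), ("M", "R"), ("C", "R")]


def parents(node, edges):
    return {a for a, b in edges if b == node}


def children(node, edges):
    return {b for a, b in edges if a == node}


def descendants(node, edges):
    """Every node reachable from `node` by following arrows forward."""
    seen, stack = set(), [node]
    while stack:
        for child in children(stack.pop(), edges):
            if child not in seen:
                seen.add(child)
                stack.append(child)
    return seen


def simple_paths(start, end, edges, path=None):
    """All simple paths from start to end, ignoring arrow direction."""
    path = path or [start]
    if start == end:
        yield path
        return
    for nxt in parents(start, edges) | children(start, edges):
        if nxt not in path:
            yield from simple_paths(nxt, end, edges, path + [nxt])


def backdoor_paths(treatment, outcome, edges):
    """Paths whose first edge points INTO the treatment."""
    return [p for p in simple_paths(treatment, outcome, edges)
            if p[1] in parents(treatment, edges)]


def is_blocked(path, z, edges):
    """d-separation rule: the path is blocked if some middle node blocks it."""
    for prev, node, nxt in zip(path, path[1:], path[2:]):
        is_collider = prev in parents(node, edges) and nxt in parents(node, edges)
        if is_collider and node not in z and not (descendants(node, edges) & z):
            return True  # an unconditioned collider blocks the path
        if not is_collider and node in z:
            return True  # a conditioned chain or fork node blocks the path
    return False


def satisfies_backdoor(treatment, outcome, z, edges):
    no_descendants = not (descendants(treatment, edges) & z)
    all_blocked = all(is_blocked(p, z, edges)
                      for p in backdoor_paths(treatment, outcome, edges))
    return no_descendants and all_blocked


for z in [set(), {"M"}, {"M", "R"}]:
    print(sorted(z), satisfies_backdoor("C", "R", z, EDGES))
```

Conditioning on nothing leaves C <- M -> R open, conditioning on {M} blocks it, and adding R to the set fails because R is a descendant of the treatment.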

Propensity score matching by hand in Python

Propensity score matching (PSM) is the entry-level causal tool, and the one most teams reach for first. The idea fits in two sentences. For every customer you called, find the most statistically similar customer you didn't call, then compare their outcomes. The matched pair approximates what a randomized experiment would have told you.

The "propensity score" is the probability of being treated given the covariates, usually estimated with plain logistic regression. Customers with the same propensity score are, by construction, comparable on everything the model saw [3]. The word "saw" is doing work in that sentence, and we'll come back to it.

Let's extend the previous script with a from-scratch PSM. We'll train the propensity model, match every treated customer to its nearest untreated neighbor on the score, and recompute the lift.

```python
from sklearn.linear_model import LogisticRegression
from sklearn.neighbors import NearestNeighbors

# Reuses df from the naive analysis above (columns: call, renew, motivation_proxy).

# Step 1: estimate the propensity to be called, given everything that drives it.
X = df[["motivation_proxy"]].to_numpy()
propensity_model = LogisticRegression().fit(X, df["call"].to_numpy())
df["propensity"] = propensity_model.predict_proba(X)[:, 1]

# Step 2: split treated and control, then match each treated customer to its
# nearest untreated neighbor on the propensity score (with replacement).
treated = df[df["call"] == 1].reset_index(drop=True)
control = df[df["call"] == 0].reset_index(drop=True)
nn = NearestNeighbors(n_neighbors=1).fit(control[["propensity"]].to_numpy())
distances, idx = nn.kneighbors(treated[["propensity"]].to_numpy())
matched_controls = control.iloc[idx.flatten()].reset_index(drop=True)

# Step 3: compare outcomes in the matched sample.
att = treated["renew"].mean() - matched_controls["renew"].mean()
print(f"Matched ATT (average treatment effect on the treated): {att * 100:.1f} pp")
```

Run this and the "lift" collapses. Where the naive analysis showed a lift of 25 to 30 percentage points, the matched ATT lands at roughly 3 to 4 percentage points, close to the true effect we simulated. The confounding inflated the answer by roughly 7x.

That is the anatomy of a false playbook. The program was not useless, but the headline was 7x larger than reality, and the difference is exactly the budget you wasted calling customers who were already going to renew.

A caveat worth naming: matching only controls for confounders you include in the propensity model. If motivation leaks out through a variable you didn't include (for example, product usage depth), your matched estimate is still biased. PSM does not rescue you from missing data. It just formalizes the comparison you thought you were making.
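A standard diagnostic for this caveat is covariate balance: the standardized mean difference (SMD) of each covariate between treated customers and their matched controls, where a common rule of thumb flags |SMD| above 0.1. The sketch below assumes a hypothetical second confounder, usage_depth, that drives who gets called but is left out of the propensity model; the coefficients are made up. Matching balances the covariate the model saw and leaves the omitted one imbalanced. In real data you can only run this check on covariates you measured, which is exactly why the omitted one is dangerous.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import LogisticRegression
from sklearn.neighbors import NearestNeighbors


def standardized_mean_diff(treated: pd.Series, control: pd.Series) -> float:
    """Difference in means divided by the pooled standard deviation."""
    pooled_sd = np.sqrt((treated.var() + control.var()) / 2)
    return (treated.mean() - control.mean()) / pooled_sd


rng = np.random.default_rng(0)
n = 20_000
sim = pd.DataFrame({"motivation": rng.normal(size=n), "usage_depth": rng.normal(size=n)})
# Both variables drive who gets called (hypothetical coefficients).
logit = 0.2 + 1.1 * sim["motivation"] + 0.8 * sim["usage_depth"]
sim["call"] = (rng.uniform(size=n) < 1 / (1 + np.exp(-logit))).astype(int)

# The propensity model only "sees" motivation; usage_depth is omitted.
ps_model = LogisticRegression().fit(sim[["motivation"]], sim["call"])
sim["propensity"] = ps_model.predict_proba(sim[["motivation"]])[:, 1]

treated = sim[sim["call"] == 1].reset_index(drop=True)
control = sim[sim["call"] == 0].reset_index(drop=True)
nn = NearestNeighbors(n_neighbors=1).fit(control[["propensity"]])
_, idx = nn.kneighbors(treated[["propensity"]])
matched = control.iloc[idx.ravel()].reset_index(drop=True)

covariates = ["motivation", "usage_depth"]
balance = pd.DataFrame({
    "smd_before": {c: standardized_mean_diff(treated[c], control[c]) for c in covariates},
    "smd_after": {c: standardized_mean_diff(treated[c], matched[c]) for c in covariates},
})
print(balance.round(2))
```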

DoWhy: the four-step loop you can run tonight

Matching by hand is fine for a one-off. When you run this analysis every month, or when you need to defend it to someone who will look for mistakes, you want structure. Microsoft's DoWhy library wraps causal inference in a four-step loop: model, identify, estimate, refute [4][5].

The idea is that you write the assumptions as code, DoWhy identifies a formal estimand from them, you estimate it, and then you refute the estimate with sensitivity checks. If the refutations fail, your estimate is fragile and the dashboard shouldn't ship it.

Here is the same analysis, reframed through DoWhy.

```python
from dowhy import CausalModel

# Step 1: model. Declare the graph as you drew it.
model = CausalModel(
    data=df,
    treatment="call",
    outcome="renew",
    common_causes=["motivation_proxy"],
)

# Step 2: identify. Let DoWhy derive the estimand from your graph.
estimand = model.identify_effect(proceed_when_unidentifiable=False)
print(estimand)

# Step 3: estimate. Pick a method. Propensity score matching again,
# but under a principled interface.
estimate = model.estimate_effect(
    estimand,
    method_name="backdoor.propensity_score_matching",
    target_units="att",
)
print(f"DoWhy ATT estimate: {estimate.value * 100:.1f} pp")
```

The estimand printout is worth reading. DoWhy tells you exactly which backdoor set it is conditioning on and which assumptions make the estimand identifiable. This is the paper trail a regression output doesn't give you.

Now the important part. DoWhy will happily return an estimate even from data that contains no causal effect, so you test your estimate with refutations.

```python
# Refutation 1: add a random common cause.
# A real causal effect should barely change when you add noise.
refute_random = model.refute_estimate(
    estimand, estimate, method_name="random_common_cause"
)
print(refute_random)

# Refutation 2: placebo treatment.
# Swap the real treatment for a random one. The effect should go to zero.
refute_placebo = model.refute_estimate(
    estimand, estimate, method_name="placebo_treatment_refuter", num_simulations=50
)
print(refute_placebo)

# Refutation 3: unobserved confounder sensitivity.
# Imagine a hidden variable of a certain strength. How much does it move the estimate?
refute_unobserved = model.refute_estimate(
    estimand,
    estimate,
    method_name="add_unobserved_common_cause",
    confounders_effect_on_treatment="binary_flip",
    confounders_effect_on_outcome="linear",
    effect_strength_on_treatment=0.1,
    effect_strength_on_outcome=0.05,
)
print(refute_unobserved)
```

Each refutation is a gut check with a different threat model. random_common_cause says: if my model is right, adding a random variable shouldn't change anything. placebo_treatment_refuter says: if I replace the real treatment with a fake one, I should get zero effect. add_unobserved_common_cause says: if there is a hidden confounder with this strength, how much does my answer move?

If your estimate survives these, it is at minimum internally consistent. If it doesn't, you know where the load-bearing assumption is. This is the discipline that separates "we ran DoWhy" from "we ran causal inference."
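To make the placebo idea concrete without DoWhy, here is a minimal sketch that reruns the hand-rolled matching estimator with the treatment column randomly permuted. It mirrors the intent of placebo_treatment_refuter but is not DoWhy's implementation; the psm_att helper is a hypothetical name, and the data is the same simulation as the naive analysis.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import LogisticRegression
from sklearn.neighbors import NearestNeighbors


def psm_att(data: pd.DataFrame, treatment: str, outcome: str, covariates: list[str]) -> float:
    """ATT via 1-nearest-neighbor propensity score matching, with replacement."""
    model = LogisticRegression().fit(data[covariates], data[treatment])
    data = data.assign(ps=model.predict_proba(data[covariates])[:, 1])
    treated = data[data[treatment] == 1]
    control = data[data[treatment] == 0]
    nn = NearestNeighbors(n_neighbors=1).fit(control[["ps"]])
    _, idx = nn.kneighbors(treated[["ps"]])
    return treated[outcome].mean() - control[outcome].to_numpy()[idx.ravel()].mean()


# Same simulated data as the naive analysis.
rng = np.random.default_rng(42)
n = 20_000
motivation = rng.normal(size=n)
call = (rng.uniform(size=n) < 1 / (1 + np.exp(-(0.2 + 1.1 * motivation)))).astype(int)
renew = (rng.uniform(size=n) < 1 / (1 + np.exp(-(-0.2 + 1.3 * motivation + 0.18 * call)))).astype(int)
df = pd.DataFrame({"call": call, "renew": renew, "motivation_proxy": motivation})

real = psm_att(df, "call", "renew", ["motivation_proxy"])

# Placebo: shuffle the treatment so it cannot cause anything, then re-estimate.
placebo_df = df.assign(call=rng.permutation(df["call"].to_numpy()))
placebo = psm_att(placebo_df, "call", "renew", ["motivation_proxy"])

print(f"Real ATT:    {real * 100:.1f} pp")
print(f"Placebo ATT: {placebo * 100:.1f} pp (should be near zero)")
```

A single permutation is noisy; DoWhy repeats it (num_simulations) and reports the spread, which is the more honest version of this check.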

Remember that regression the CSM team presented? Here is the same retention program, compared across each step of the causal pipeline.

| Method | Estimated lift | What it answers | What it misses |
|---|---|---|---|
| Naive regression | ~30 pp | "Do callers renew more?" | Ignores confounders; conflates motivation with the call |
| Propensity score matching | ~4 pp | "What's the effect on similar customers?" | Only controls for confounders you measured |
| DoWhy (do-calculus) | ~4 pp | "What's the causal effect, with refutations?" | Still assumes the DAG is right |
| Uplift (causal forest, top 30%) | ~11 pp | "Who should we actually call?" | Needs thousands of samples for stable CATE |

The naive analysis said 30 percentage points. Propensity matching and DoWhy's principled estimate both said about 4. Uplift modeling (up next) says that if you target the top 30% most persuadable segment, you can get roughly 11. That last number is what you were hoping for all along.

From 'did it work?' to 'who should we treat?' with EconML

DoWhy answers "did this cause that." The program needed a different question: given finite CSM hours, which customers should we call? Some customers will renew whether you call or not. Some will churn whether you call or not. The persuadables (customers whose renewal probability actually moves when you intervene) are the ones worth your budget.

This is the uplift modeling problem. Uber's CausalML and Microsoft's EconML are the two well-worn libraries here. EconML's CausalForestDML combines double machine learning (DML) with a causal forest to estimate heterogeneous treatment effects: per customer, "how much does calling this customer change their renewal probability?" [6][7].

```python
import numpy as np
import pandas as pd
from econml.dml import CausalForestDML
from sklearn.ensemble import GradientBoostingClassifier, GradientBoostingRegressor

# We need richer features for uplift to show its strength.
# Pretend we have three pre-treatment covariates.
rng = np.random.default_rng(7)
n = 20_000
motivation = rng.normal(size=n)
usage_depth = rng.normal(size=n)
tenure_months = rng.integers(1, 48, size=n)

pr_call = 1 / (1 + np.exp(-(0.2 + 1.1 * motivation - 0.1 * usage_depth)))
T = (rng.uniform(size=n) < pr_call).astype(int)

# Heterogeneous effect: calls help low-tenure customers a lot,
# barely move mid-tenure customers, and slightly hurt long-tenure ones.
cate_true = (
    0.3 * (tenure_months < 6)
    + 0.05 * ((tenure_months >= 6) & (tenure_months < 18))
    - 0.02 * (tenure_months >= 18)
)
pr_renew = np.clip(0.5 + 0.2 * motivation + 0.1 * usage_depth + cate_true * T, 0.01, 0.99)
Y = (rng.uniform(size=n) < pr_renew).astype(int)

X = pd.DataFrame({"motivation": motivation, "usage_depth": usage_depth, "tenure_months": tenure_months})

# Double machine learning causal forest.
# The outcome model is a regressor: DML residualizes Y against E[Y | X], a probability.
# The treatment model is a classifier because the treatment is discrete.
cf = CausalForestDML(
    model_y=GradientBoostingRegressor(max_depth=3),
    model_t=GradientBoostingClassifier(max_depth=3),
    discrete_treatment=True,
    n_estimators=200,
    min_samples_leaf=50,
    random_state=0,
)
cf.fit(Y=Y, T=T, X=X)

# Per-customer treatment effect (CATE).
cate = cf.effect(X)
X["uplift"] = cate

top_decile = X.nlargest(len(X) // 10, "uplift")
print(f"Average predicted uplift, top 10%: {top_decile['uplift'].mean() * 100:.1f} pp")
print(f"Average predicted uplift, full population: {cate.mean() * 100:.1f} pp")
```

Run that and you will see something like this: the full population averages an uplift of a few percentage points (the simulated true average is roughly 3), while the top 10% of persuadables average several times that, because the simulation builds in a +30 pp effect for accounts under six months old. That is the actionable insight. You don't need to call 4,000 accounts. You need to call the top 1,200 and measure whether the lift materializes.

One detail that matters: the model can also tell you that calls hurt a subset of customers. In this simulation, accounts with 18+ months of tenure have a true effect of -2 pp: steady long-tenure accounts might feel pestered by a check-in call and churn faster. This is not a bug. It is the whole point. Correlation analysis hides that negative effect. Uplift surfaces it so you can stop calling those accounts.
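If you want a sanity baseline for the forest, a simpler technique is the two-model "T-learner": fit one outcome model on called customers and one on uncalled customers, and take the difference of their predictions. This is a swapped-in method, not what CausalForestDML does internally, and it is usually noisier. The sketch re-runs the same simulation as the EconML example (same seed and draw order) and compares the baseline's average uplift for new accounts against long-tenure ones.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingClassifier

# Same simulation as the CausalForestDML example (same seed and draw order).
rng = np.random.default_rng(7)
n = 20_000
motivation = rng.normal(size=n)
usage_depth = rng.normal(size=n)
tenure_months = rng.integers(1, 48, size=n)
pr_call = 1 / (1 + np.exp(-(0.2 + 1.1 * motivation - 0.1 * usage_depth)))
T = (rng.uniform(size=n) < pr_call).astype(int)
cate_true = (0.3 * (tenure_months < 6)
             + 0.05 * ((tenure_months >= 6) & (tenure_months < 18))
             - 0.02 * (tenure_months >= 18))
pr_renew = np.clip(0.5 + 0.2 * motivation + 0.1 * usage_depth + cate_true * T, 0.01, 0.99)
Y = (rng.uniform(size=n) < pr_renew).astype(int)
X = np.column_stack([motivation, usage_depth, tenure_months])

# T-learner: one outcome model per arm. Conditioning on X (which contains both
# confounders in this simulation) is what makes the difference causal.
model_called = GradientBoostingClassifier(max_depth=3, random_state=0).fit(X[T == 1], Y[T == 1])
model_not_called = GradientBoostingClassifier(max_depth=3, random_state=0).fit(X[T == 0], Y[T == 0])
uplift = model_called.predict_proba(X)[:, 1] - model_not_called.predict_proba(X)[:, 1]

new_accounts = tenure_months < 6
long_tenure = tenure_months >= 18
print(f"Mean T-learner uplift, tenure < 6 months:   {uplift[new_accounts].mean() * 100:.1f} pp")
print(f"Mean T-learner uplift, tenure >= 18 months: {uplift[long_tenure].mean() * 100:.1f} pp")
```

If the baseline and the forest disagree sharply on who the persuadables are, treat both rankings with suspicion until a holdout test settles it.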

Wiring this to conversation signals with the Chanl SDK

Everything above assumed you already had the treatment flag, the outcome, and some covariates. In real CX, the confounders you need (motivation, intent, risk, expansion) are usually sitting inside your call transcripts. You just haven't extracted them as structured data yet.

This is what Signal does: it reads every conversation and extracts a structured record of intent, risk, expansion opportunities, and sentiment, so you can use them as the confounders in a causal model. Before Signal, the motivation proxy in the code above was imaginary. After Signal, it is a real column in your dataframe.

Here is how you'd pull Signal extractions per customer and feed them into DoWhy.

```python
import os

import httpx
import pandas as pd
from dowhy import CausalModel

API = "https://api.chanl.ai"
HEADERS = {"Authorization": f"Bearer {os.environ['CHANL_API_KEY']}"}

# Pull conversations for the customer cohort you care about.
# The /api/v1/interactions endpoint paginates; this fetches one page to keep the post simple.
resp = httpx.get(
    f"{API}/api/v1/interactions",
    headers=HEADERS,
    params={"startDate": "2026-01-01", "endDate": "2026-03-31", "limit": 10000},
    timeout=60,
)
resp.raise_for_status()
interactions = resp.json()["data"]["items"]

rows = []
for interaction in interactions:
    # Signal extractions live on the interaction analysis subdoc.
    signal = interaction.get("analysis", {}).get("signal", {})
    rows.append({
        "customer_id": interaction["customerId"],
        # An outbound voice interaction counts as a CSM call.
        "had_csm_call": int(
            interaction.get("channel") == "voice"
            and interaction.get("direction") == "outbound"
        ),
        "risk_score": signal.get("riskScore", 0.0),
        "expansion_score": signal.get("expansionScore", 0.0),
        "sentiment": signal.get("sentimentScore", 0.0),
        "pricing_mention": int("pricing" in signal.get("intents", [])),
    })

conversations = pd.DataFrame(rows)

# Join to the CRM renewal outcome you already have.
renewals = pd.read_csv("renewals_2026_q1.csv")  # columns: customer_id, renewed

df = (
    conversations.groupby("customer_id")
    .agg({
        "had_csm_call": "max",
        "risk_score": "mean",
        "expansion_score": "mean",
        "sentiment": "mean",
        "pricing_mention": "max",
    })
    # Inner join: customers without a CRM renewal record have no outcome to analyze.
    .join(renewals.set_index("customer_id"), how="inner")
)

# Feed into DoWhy exactly as before, but with real confounders.
model = CausalModel(
    data=df.reset_index(),
    treatment="had_csm_call",
    outcome="renewed",
    common_causes=["risk_score", "expansion_score", "sentiment", "pricing_mention"],
)
```

The important line is common_causes. These are the confounders you now have because Signal extracted them from conversation text. The analysis pipeline is the same. The quality of the answer is much higher because the DAG is no longer missing nodes.

There is a temptation here to treat things like "pricing mention" as a cause of churn. It usually isn't. It is better read as a proxy for a confounder: price sensitivity drives customers both to mention pricing AND to churn. Causal machinery lets you say that out loud instead of shipping a dashboard that confuses the two.
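One refinement the backdoor criterion asks for: adjustment variables must not be descendants of the treatment, and a Signal score averaged over the whole quarter can include the CSM call itself and everything after it. Here is a minimal pandas sketch that keeps only conversations before each customer's first CSM call. It assumes a started_at timestamp on each conversation row, which the extraction code above does not pull; treat that column name, like the helper name, as hypothetical.

```python
import pandas as pd


def pre_treatment_features(conversations: pd.DataFrame) -> pd.DataFrame:
    """Average Signal scores over conversations before each customer's first CSM call.

    Expects hypothetical columns: customer_id, started_at (datetime),
    had_csm_call (0/1), risk_score, sentiment.
    """
    first_call = (
        conversations.loc[conversations["had_csm_call"] == 1]
        .groupby("customer_id")["started_at"].min()
        .rename("first_call_at")
    )
    merged = conversations.join(first_call, on="customer_id")
    # Never-called customers keep everything; called customers keep only earlier conversations.
    before = merged["first_call_at"].isna() | (merged["started_at"] < merged["first_call_at"])
    features = merged.loc[before].groupby("customer_id")[["risk_score", "sentiment"]].mean()
    treated = conversations.groupby("customer_id")["had_csm_call"].max()
    # Right join keeps customers whose first conversation was the call (their features are NaN).
    return features.join(treated, how="right")


conversations = pd.DataFrame({
    "customer_id": ["a", "a", "a", "b", "b"],
    "started_at": pd.to_datetime(
        ["2026-01-05", "2026-01-10", "2026-01-20", "2026-01-03", "2026-01-08"]
    ),
    "had_csm_call": [0, 1, 0, 0, 0],
    "risk_score": [0.8, 0.2, 0.1, 0.4, 0.6],
    "sentiment": [-0.5, 0.3, 0.6, 0.1, 0.0],
})
print(pre_treatment_features(conversations))
```

Customer "a" keeps only the January 5 conversation, so the call and the calmer conversation after it no longer leak into the risk score.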

If you want to wire this into your TypeScript services (for example, a batch job that computes uplift scores and writes them back to your CRM), the Python job does the heavy lifting and the TS side is a thin orchestrator.

```typescript
import { readFileSync } from "fs";

interface UpliftRow {
  customer_id: string;
  predicted_uplift: number;
  uplift_decile: number;
}

const API = "https://api.chanl.ai";
const headers = {
  Authorization: `Bearer ${process.env.CHANL_API_KEY!}`,
  "Content-Type": "application/json",
};

async function run() {
  // The Python job wrote a CSV with a header row
  // (customer_id,predicted_uplift,uplift_decile). Read it and push per-customer
  // uplift as a custom attribute on the customer record so CSMs can sort by it.
  const raw = readFileSync("./out/uplift_scores.csv", "utf8");
  const rows: UpliftRow[] = raw
    .trim()
    .split(/\r?\n/) // tolerate Windows line endings
    .slice(1) // skip the header row
    .map((line) => {
      const [customer_id, predicted_uplift, uplift_decile] = line.split(",");
      return {
        customer_id,
        predicted_uplift: Number(predicted_uplift),
        uplift_decile: Number(uplift_decile),
      };
    });

  let failed = 0;
  for (const row of rows) {
    const res = await fetch(`${API}/api/v1/customers/${encodeURIComponent(row.customer_id)}`, {
      method: "PUT",
      headers,
      body: JSON.stringify({
        customAttributes: {
          uplift_score: row.predicted_uplift,
          uplift_decile: row.uplift_decile,
        },
      }),
    });
    if (!res.ok) {
      failed += 1;
      console.warn(`Customer ${row.customer_id} failed: ${res.status}`);
    }
  }

  console.log(`Wrote uplift scores for ${rows.length - failed} of ${rows.length} customers`);
}

run().catch((err) => {
  console.error(err);
  process.exit(1);
});
```

The TypeScript side is boring, and that's the point. The causal math lives in Python where the libraries are mature: DoWhy, EconML, Uber's CausalML, CausalNex, and DoubleML if you want cross-fitted DML without writing it yourself. If you are philosophically fancy, Meta's Bean Machine leans into probabilistic programming for the same goal. Results flow back into the systems CSMs actually use. Pair this with Analytics to track whether each predicted uplift decile actually delivers the lift the model promised. If the top decile doesn't outperform the bottom decile, the model is broken and no dashboard will save you.
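That decile check can be sketched in a few lines, assuming you keep a randomized holdout: within each predicted-uplift decile, some customers are randomly not called, so the treated-minus-control renewal gap estimates that decile's real uplift. The data below is simulated, the noisy score stands in for model predictions, and observed_uplift_by_decile is a hypothetical helper name; in practice you would load your own scores and CRM outcomes.

```python
import numpy as np
import pandas as pd


def observed_uplift_by_decile(scores, treated, renewed) -> pd.Series:
    """Treated-minus-control renewal rate per predicted-uplift decile (10 = highest)."""
    frame = pd.DataFrame({"score": scores, "treated": treated, "renewed": renewed})
    frame["decile"] = pd.qcut(frame["score"], 10, labels=False) + 1
    rates = frame.groupby(["decile", "treated"])["renewed"].mean().unstack("treated")
    return rates[1] - rates[0]


# Simulated randomized holdout: the call is a coin flip, so there is no confounding.
rng = np.random.default_rng(0)
n = 50_000
tenure_months = rng.integers(1, 48, size=n)
true_uplift = np.where(tenure_months < 6, 0.30, np.where(tenure_months < 18, 0.05, -0.02))
scores = true_uplift + rng.normal(scale=0.05, size=n)  # stand-in for model predictions
treated = rng.integers(0, 2, size=n)
renewed = (rng.uniform(size=n) < 0.5 + true_uplift * treated).astype(int)

by_decile = observed_uplift_by_decile(scores, treated, renewed)
print((by_decile * 100).round(1))
```

Without the randomized holdout, the per-decile gap is just another confounded comparison, so the holdout is the part of the design not to skip.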

Where does causal inference break in practice?

Causal inference fails when its assumptions fail, and the assumptions are easy to break without noticing. The five failure modes below cover most of the ways a production causal estimate goes wrong: unmeasured confounders, lack of overlap, SUTVA violations, small samples, and refutation theater. Here they are in the order you'll hit them.

Unmeasured confounders. If something drives both treatment and outcome and you didn't measure it, your estimate is biased. There is no fix that doesn't involve going back and measuring it. DoWhy's add_unobserved_common_cause refutation tells you how sensitive your answer is, which is the closest thing to an insurance policy.

Positivity violations (lack of overlap). If certain types of customers never get called, matching can't find comparisons for them. Their causal effect is unidentified. Check the distribution of propensity scores across treated and control. If the tails don't overlap, you can't estimate effects in those tails honestly.
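Here is a minimal sketch of that overlap check with numpy and pandas. common_support returns the propensity range where both groups have data, and overlap_table bins the scores so you can spot bins with customers from only one group. Both helper names are illustrative, and the tiny example arrays are made up; in practice you would pass the propensity column from your matching step.

```python
import numpy as np
import pandas as pd


def common_support(propensity, treated):
    """Propensity range covered by BOTH groups, plus a mask of units inside it."""
    propensity, treated = np.asarray(propensity), np.asarray(treated)
    lo = max(propensity[treated == 1].min(), propensity[treated == 0].min())
    hi = min(propensity[treated == 1].max(), propensity[treated == 0].max())
    inside = (propensity >= lo) & (propensity <= hi)
    return lo, hi, inside


def overlap_table(propensity, treated, bins=10):
    """Counts of treated and control units per propensity bin."""
    propensity, treated = np.asarray(propensity), np.asarray(treated)
    edges = np.linspace(0.0, 1.0, bins + 1)
    t_counts, _ = np.histogram(propensity[treated == 1], bins=edges)
    c_counts, _ = np.histogram(propensity[treated == 0], bins=edges)
    table = pd.DataFrame({"bin_start": edges[:-1], "treated": t_counts, "control": c_counts})
    # A bin with units from only one group has no comparison: a positivity problem.
    table["one_sided"] = (table["treated"] == 0) != (table["control"] == 0)
    return table


propensity = [0.05, 0.2, 0.5, 0.7, 0.9, 0.1, 0.3, 0.6]
treated = [1, 1, 1, 1, 1, 0, 0, 0]
lo, hi, inside = common_support(propensity, treated)
print(f"Common support: [{lo:.2f}, {hi:.2f}], {inside.sum()} of {len(inside)} units inside")
print(overlap_table(propensity, treated))
```

Trimming to the common support is one common response, but it changes the population your estimate describes, so report it alongside the number.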

SUTVA (stable unit treatment value assumption) violations. If calling Customer A changes whether Customer B renews (because they talk on Slack, or because your CSM learned from A's call and handled B differently), the no-interference part of SUTVA breaks: each customer's outcome is supposed to depend only on their own treatment. Network effects between customers are the most common case.

Small samples and heterogeneity. Causal forests want thousands of samples before the per-customer effect estimates stabilize. On 800 customers, the heterogeneous effect will mostly be noise. Check confidence intervals on CATE, not just the point estimates. If the intervals straddle zero for most customers, the model is telling you "I don't know."
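With EconML's causal forest, cf.effect_interval(X, alpha=0.05) returns per-customer lower and upper bounds. The helper below is a minimal sketch for turning those bounds into the few numbers worth reading; summarize_cate_intervals is a hypothetical name, and the toy bounds stand in for real model output.

```python
import numpy as np


def summarize_cate_intervals(lower, upper) -> dict:
    """Share of customers whose CATE interval is informative vs. straddles zero."""
    lower, upper = np.asarray(lower), np.asarray(upper)
    return {
        "share_straddling_zero": float(np.mean((lower < 0) & (upper > 0))),
        "share_confidently_positive": float(np.mean(lower > 0)),
        "share_confidently_negative": float(np.mean(upper < 0)),
        "median_width": float(np.median(upper - lower)),
    }


# With the fitted CausalForestDML `cf` from the uplift section (drop the added column first):
#     lower, upper = cf.effect_interval(X.drop(columns="uplift"), alpha=0.05)
# Toy bounds for illustration:
lower = np.array([-0.10, -0.05, 0.02, 0.15, -0.08])
upper = np.array([0.12, 0.03, 0.09, 0.40, -0.01])
print(summarize_cate_intervals(lower, upper))
```

If share_straddling_zero dominates, the per-customer ranking is mostly noise, however confident the point estimates look.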

Refutation theater. It is possible to run DoWhy refutations, have them pass, and still be wrong because your DAG omits a critical node. Refutations don't validate the graph. They validate the math given the graph. The graph is always a model decision. Get a colleague to argue with it.

These limits are why good causal analysis ships with caveats and confidence intervals instead of a headline percentage. If someone hands you a one-number result without a graph, a refutation, and an overlap plot, it isn't a causal analysis. It's a regression in a fake mustache.

The end of vibe-based CX analytics

CX analytics has lived in the correlation era for a decade. "Customers who mention pricing churn" (often wrong, because price-sensitive customers both mention pricing and churn). "Customers who use feature X stay" (often selection, because customers who would stay anyway use everything). "NPS predicts retention" (reverse causation as much as anything).

None of these insights are useless. They're good for discovery, bad for action. The next step is to take the signals you already extract from conversations (intent, risk, expansion, sentiment) and treat them as confounders, treatments, or outcomes in a properly specified causal graph. That's how you move from "we have a ton of data" to "we act on it and the actions work."

The pipeline isn't exotic. Propensity matching is ten lines of scikit-learn. DoWhy wraps twenty lines around a principled workflow. EconML's CausalForestDML drops into the Python job you already run. The tools are easy. What's hard is drawing a DAG you'd defend in front of a skeptical colleague, and admitting when a variable you love is actually a confounder wearing a KPI costume.

Back to the CSM team and their 31% regression. If they'd run the matched analysis first, the deck would have shown 4 points of lift, and someone would have asked the right question: which 1,200 accounts should we call, instead of which 4,000? The program would have needed less than a third of the calls and actually moved retention. That's the gap causal inference closes.

If you want a starting point on the evaluation side, our earlier piece on AI agent benchmarks and production performance walks through the same skepticism applied to agent quality, and turning analytics into agent improvements shows how to close the loop from signal to action. The causal layer is what sits between them. Once you have it, the signals from Scorecards stop being diagnostic noise and start being inputs to a decision process that actually moves the business.

Sources

- DoWhy: An end-to-end library for causal inference (PyWhy / Microsoft)

- DoWhy documentation: making causal inference easy

- DoWhy on PyPI

- EconML (py-why): heterogeneous treatment effects and double machine learning

- EconML / CausalML KDD 2021 Tutorial

- Uber CausalML: Uplift modeling and causal inference with machine learning

- Pearl, The Foundations of Causal Inference

- The Book of Why (Pearl and Mackenzie)

- Do-Calculus adventures: the three rules and the backdoor adjustment formula (Andrew Heiss)

- Back-Door and Front-Door Criteria and do-Calculus (USC notes)

- Causal Inference for the Brave and True: Propensity Score chapter (Facure)

- Propensity Score Matching practical guide (Built In)

- Causal Inference with Python: Propensity Score Matching (Towards Data Science)

- Gutierrez and Gerardy: Causal Inference and Uplift Modeling literature review

- Beyond Churn Models: Causal Inference and Uplift Modeling for Retention

- Uplift Modeling: Maximizing Marketing ROI Through Causal Inference

- Dynamic Marketing Uplift Modeling with Causal Forests and Deep RL (2025, MDPI)

- How applied researchers use the causal forest: a methodological review (Rehill 2025)

- Synthetic Control Method (Wikipedia)

- Abadie: Synthetic Controls, Methods and Practice (NBER)

- Using Synthetic Controls: Feasibility and Data Requirements (Abadie, JEL)

Co-founder

Building the platform for AI agents at Chanl — tools, testing, and observability for customer experience.
