Your agent replies with a polite "Got it, I've refunded order #8842." That is six words. Roughly 14 tokens.

The API bill for that turn can easily show several thousand output tokens.

The rest are reasoning tokens. You cannot see them. You cannot log them as text. They count against your output budget. They bill at the output rate. And they are now the single line item that surprises teams the most when they move an agent from GPT-4o to GPT-5 or from Claude Sonnet without thinking to Claude Opus 4.7 with extended thinking.

This post is about that shadow cost: what it is, how to measure it, how to cap it, and why "reasoning tokens" is the budget category nobody was tracking a year ago.

TL;DR: The Numbers That Matter

- Reasoning tokens bill as output tokens at output rates. GPT-5 thinking: $10/MTok. Claude Opus 4.7 extended thinking: $25/MTok. Gemini 3.1 Pro thinking: billed as output.

- Complex turns can generate 3x to 5x more reasoning tokens than visible output. Opus documentation cites that range for math and multi-file code review. Ambiguous tool-calling turns in CX agents can push the ratio higher still.

- Reasoning tokens consume max_output_tokens. GPT-5 has a 128,000 token output ceiling. A high-effort reasoning burst can eat 80% of it before the visible reply starts.

- reasoning_effort is the single most expensive knob on the API. Community instrumentation across the five levels (minimal, low, medium, high, xhigh) shows close to a 10x swing in generated reasoning volume for the same prompt.

- Most teams measure total output tokens, not the reasoning/visible split. That is why the bill surprises them.

What Are Reasoning Tokens, Really?

Reasoning-enabled models run an internal pass before producing their visible answer. On OpenAI's o-series and GPT-5, the model generates a private chain of thought into a reasoning channel that is not returned in the response body. On Claude extended thinking, the model produces thinking blocks that can be returned or omitted. On Gemini 3.1 Pro, thinking tokens are reported in the usage field but not the content field.

The common properties across all three providers:

- The tokens are generated by the model on your behalf.

- The tokens are billed at the output token rate.

- The tokens consume space in the output token budget (max_output_tokens on OpenAI, max_tokens on Claude).

- You cannot see the text. You can only see the count.

That fourth point is where the operational pain starts. Every other category of token is inspectable: system prompt, tool schemas, conversation history, RAG chunks, memory injection. You can open your logs and read what got billed. Reasoning tokens are the first line item in agent infrastructure that bills without producing readable artifacts.

The Billing Math

Here is the shape of a GPT-5 usage object on the Chat Completions API (the Responses API reports the same count under output_tokens_details.reasoning_tokens):

    {
      "prompt_tokens": 3420,
      "completion_tokens": 4823,
      "total_tokens": 8243,
      "completion_tokens_details": {
        "reasoning_tokens": 4809,
        "accepted_prediction_tokens": 0
      }
    }

completion_tokens is what you are billed on. It includes reasoning. The visible reply is completion_tokens minus reasoning_tokens: in this example, 4,823 minus 4,809 leaves 14 visible tokens, and the reasoning pass consumed the other 4,809. (accepted_prediction_tokens only applies to Predicted Outputs and is not the visible count.) Same rate, same bucket, no way to see the difference unless you read the nested field.

At GPT-5 thinking rates ($10/MTok output), that turn costs:

- Visible reply: 14 tokens × $10/MTok = $0.00014

- Reasoning: 4,809 tokens × $10/MTok = $0.04809

- The reasoning pass costs roughly 343x what the visible reply costs. About 99.7% of the output cost is invisible.
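The same split can be computed from any logged usage object. The sketch below is a minimal illustration, not an official SDK helper: `splitOutputCost` and its result shape are hypothetical names, and the output rate is a value you pass in yourself.

```typescript
// Relevant part of a Chat Completions usage object.
interface CompletionUsage {
  completion_tokens: number;
  completion_tokens_details?: { reasoning_tokens?: number };
}

interface OutputCostSplit {
  visibleTokens: number;
  reasoningTokens: number;
  visibleCostUsd: number;
  reasoningCostUsd: number;
  reasoningShare: number; // fraction of output cost spent on reasoning, 0..1
}

// outputRatePerMTok: USD per million output tokens (caller-supplied assumption).
function splitOutputCost(usage: CompletionUsage, outputRatePerMTok: number): OutputCostSplit {
  const reasoningTokens = usage.completion_tokens_details?.reasoning_tokens ?? 0;
  // Visible output is whatever remains after reasoning is subtracted.
  const visibleTokens = Math.max(usage.completion_tokens - reasoningTokens, 0);
  const usdPerToken = outputRatePerMTok / 1_000_000;
  const visibleCostUsd = visibleTokens * usdPerToken;
  const reasoningCostUsd = reasoningTokens * usdPerToken;
  const totalCostUsd = visibleCostUsd + reasoningCostUsd;
  return {
    visibleTokens,
    reasoningTokens,
    visibleCostUsd,
    reasoningCostUsd,
    reasoningShare: totalCostUsd === 0 ? 0 : reasoningCostUsd / totalCostUsd,
  };
}

// The turn from the example above, at $10/MTok output.
const exampleSplit = splitOutputCost(
  { completion_tokens: 4823, completion_tokens_details: { reasoning_tokens: 4809 } },
  10,
);
console.log(exampleSplit);
```

Falling back to zero when the nested field is missing keeps the helper usable on models that report no reasoning tokens at all.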

Now extrapolate. A CX agent doing 500 conversations per day, 8 turns per conversation, average 3,000 reasoning tokens per turn:

- 500 × 8 × 3,000 = 12,000,000 reasoning tokens/day

- At $10/MTok = $120/day in reasoning alone = $3,600/month

That is on top of input, on top of visible output, on top of caching. And it is a line item that did not exist in your GPT-4o budget because GPT-4o has no reasoning tokens.
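To rerun that extrapolation with your own traffic numbers, a small projection function is enough. This is a sketch under stated assumptions: `projectReasoningSpend` and the `ReasoningWorkload` shape are illustrative names, and the 30-day month is a simplification.

```typescript
interface ReasoningWorkload {
  conversationsPerDay: number;
  turnsPerConversation: number;
  reasoningTokensPerTurn: number; // average, ideally taken from your own logs
  outputRatePerMTok: number;      // USD per million output tokens
  daysPerMonth?: number;          // defaults to 30
}

function projectReasoningSpend(w: ReasoningWorkload) {
  const tokensPerDay =
    w.conversationsPerDay * w.turnsPerConversation * w.reasoningTokensPerTurn;
  const usdPerDay = (tokensPerDay / 1_000_000) * w.outputRatePerMTok;
  const usdPerMonth = usdPerDay * (w.daysPerMonth ?? 30);
  return { tokensPerDay, usdPerDay, usdPerMonth };
}

// The CX agent from the example: 500 conversations, 8 turns, 3,000 reasoning tokens per turn.
const projection = projectReasoningSpend({
  conversationsPerDay: 500,
  turnsPerConversation: 8,
  reasoningTokensPerTurn: 3000,
  outputRatePerMTok: 10,
});
console.log(projection);
```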

Claude extended thinking math runs similar. At Opus 4.7's $25/MTok output rate, a turn with 500 visible output tokens and 2,000 thinking tokens costs $0.0625 (2,500 tokens × $25/MTok) versus $0.0125 without thinking. That is a 5x markup for the same visible answer, taken directly from Anthropic's own pricing examples.

The Output-Budget Trap

This is the part that bites teams in production.

GPT-5 has a 128,000 token output limit. That limit covers reasoning + visible output combined. If you set reasoning_effort: "high" and ask a hard question, the model can burn 80,000 reasoning tokens before writing a single visible word. You have 48,000 output tokens left. For most CX responses that is fine. For long-form content generation, call summarization, or structured data extraction, you will hit the ceiling and get a silent truncation.

What silent truncation looks like in a logs dashboard:

- Agent reply is shorter than the system prompt suggested.

- The finish_reason field is "length", not "stop".

- Reasoning token count is in five digits.

- No error thrown.

Claude extended thinking behaves the same way. Set max_tokens: 8000 with thinking enabled, burn 7,000 on thinking, you get up to 1,000 of visible reply before Anthropic stops generating. If your system prompt tells the agent to return a JSON object, that JSON may be cut off mid-string. Downstream parsing silently fails.

The fix is not to crank max_output_tokens higher. The fix is to cap reasoning separately.
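Before tuning anything, it helps to make truncation visible per turn. The sketch below assumes you already log the finish reason, token counts, and the cap you sent. `inspectTurn` and `TurnOutcome` are hypothetical names, and the check covers only the Chat Completions finish_reason value "length" and the Anthropic stop_reason value "max_tokens".

```typescript
type Provider = "openai" | "anthropic";

interface TurnOutcome {
  provider: Provider;
  finishReason: string;    // finish_reason (OpenAI) or stop_reason (Anthropic)
  outputTokens: number;    // billed output tokens, reasoning included
  reasoningTokens: number;
  maxOutputTokens: number; // the cap sent with the request
}

// Value each provider reports when generation stopped at the token cap.
const TRUNCATION_REASON: Record<Provider, string> = {
  openai: "length",
  anthropic: "max_tokens",
};

function inspectTurn(t: TurnOutcome) {
  const truncated = t.finishReason === TRUNCATION_REASON[t.provider];
  const utilization = t.outputTokens / t.maxOutputTokens;
  const reasoningShareOfBudget = t.reasoningTokens / t.maxOutputTokens;
  const visibleTokens = Math.max(t.outputTokens - t.reasoningTokens, 0);
  return { truncated, utilization, reasoningShareOfBudget, visibleTokens };
}

// The Claude case from above: max_tokens 8000, 7,000 of it spent on thinking.
const claudeTurn = inspectTurn({
  provider: "anthropic",
  finishReason: "max_tokens",
  outputTokens: 8000,
  reasoningTokens: 7000,
  maxOutputTokens: 8000,
});
console.log(claudeTurn);
```

A turn that is both truncated and has most of its budget consumed by reasoning is the signature of the trap described here, as opposed to a reply that was simply long.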

Measurement: The Fields You Actually Need to Log

If you take one thing from this post, make it this list. These are the fields that should be in every agent request log, per turn, for every provider.

| Field | OpenAI (GPT-5) | Anthropic (Claude) | What to do with it |
|---|---|---|---|
| Visible output tokens | completion_tokens minus reasoning_tokens | output_tokens minus thinking tokens | Compare to reply length to catch truncation |
| Reasoning tokens | completion_tokens_details.reasoning_tokens (Chat Completions) or output_tokens_details.reasoning_tokens (Responses API) | Thinking block token count | Track the ratio to visible output |
| Max output budget | max_completion_tokens set at request | max_tokens set at request | Alert when utilization > 80% |
| Finish reason | finish_reason | stop_reason | length or max_tokens = truncation |
| Reasoning effort | Your reasoning_effort param | Your thinking.budget_tokens param | Correlate with reasoning volume |

One derived metric I put on every production dashboard: reasoning ratio = reasoning_tokens / visible_output_tokens. Above 5:1 on a single turn is a yellow flag. Above 20:1 is a red flag and usually means reasoning_effort is too high for the task.

A second derived metric: reasoning cost share = reasoning_token_cost / total_token_cost. When this crosses 40% across a population of turns, you are paying for thinking the agent does not need.
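Both derived metrics are a few lines of arithmetic. The following is a minimal sketch using the thresholds stated above. The function names are illustrative, and the input and output rates passed in the example are placeholders, not quoted prices.

```typescript
type Flag = "ok" | "yellow" | "red";

// reasoning ratio = reasoning_tokens / visible_output_tokens
function reasoningRatio(reasoningTokens: number, visibleTokens: number): number {
  if (visibleTokens <= 0) return reasoningTokens > 0 ? Infinity : 0;
  return reasoningTokens / visibleTokens;
}

// Above 5:1 is a yellow flag, above 20:1 is a red flag.
function flagForRatio(ratio: number): Flag {
  if (ratio > 20) return "red";
  if (ratio > 5) return "yellow";
  return "ok";
}

interface TurnTokens {
  inputTokens: number;
  visibleTokens: number;
  reasoningTokens: number;
}

interface Rates {
  inputPerMTok: number;  // USD per million input tokens
  outputPerMTok: number; // USD per million output tokens
}

// reasoning cost share = reasoning_token_cost / total_token_cost over a set of turns
function reasoningCostShare(turns: TurnTokens[], rates: Rates): number {
  let reasoningCost = 0;
  let totalCost = 0;
  for (const t of turns) {
    const reasoning = (t.reasoningTokens / 1_000_000) * rates.outputPerMTok;
    const visible = (t.visibleTokens / 1_000_000) * rates.outputPerMTok;
    const input = (t.inputTokens / 1_000_000) * rates.inputPerMTok;
    reasoningCost += reasoning;
    totalCost += reasoning + visible + input;
  }
  return totalCost === 0 ? 0 : reasoningCost / totalCost;
}

const refundFlag = flagForRatio(reasoningRatio(4809, 14));
const share = reasoningCostShare(
  [{ inputTokens: 3420, visibleTokens: 14, reasoningTokens: 4809 }],
  { inputPerMTok: 1, outputPerMTok: 10 }, // placeholder rates
);
console.log(refundFlag, share.toFixed(3));
```

Treating a turn with reasoning but zero visible tokens as an infinite ratio makes fully truncated turns show up as red rather than disappearing from the chart.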

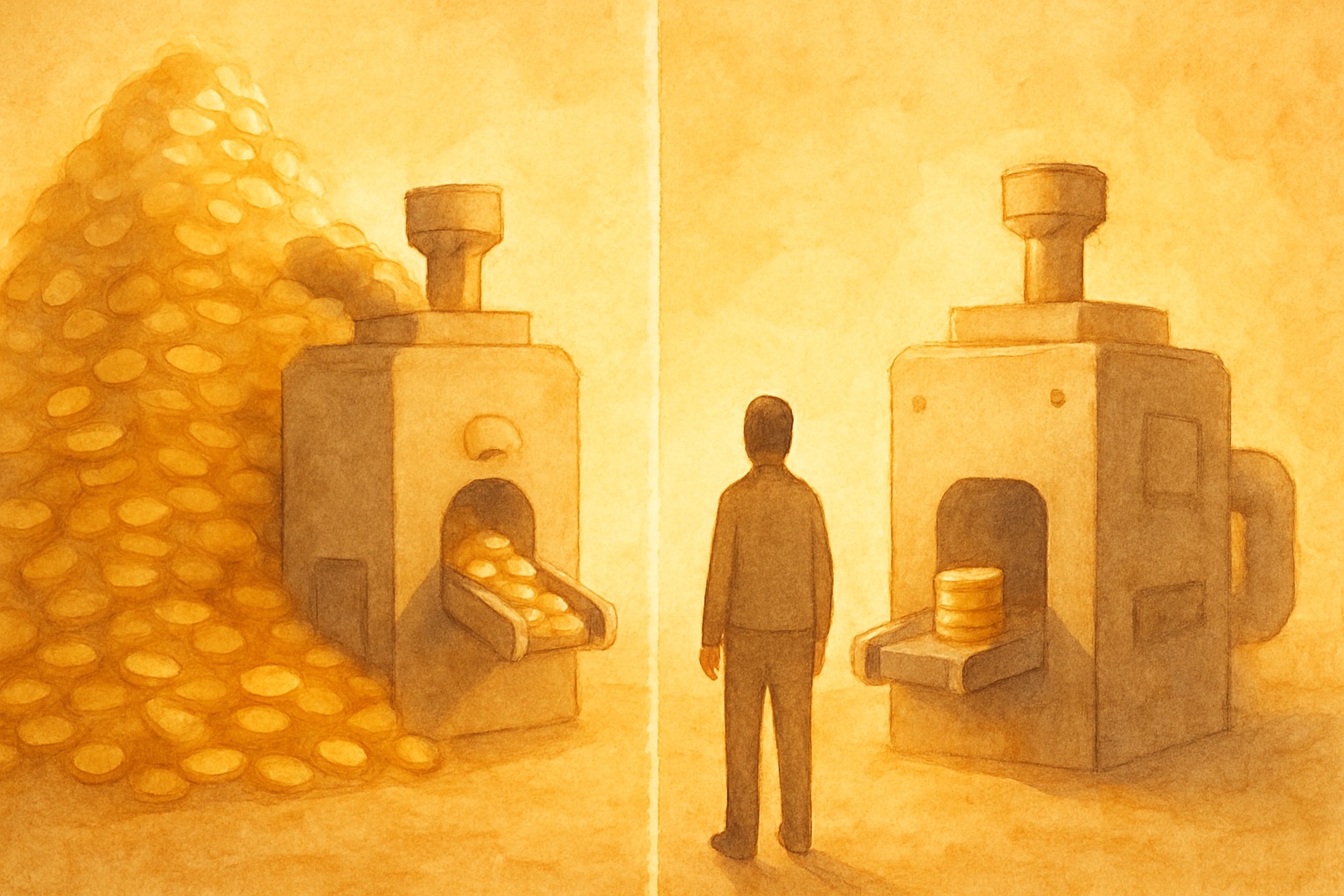

Both metrics belong next to your usual latency and token-per-turn charts. Here is roughly what that looks like in practice:

Caps and Effort Controls Across Providers

Every reasoning provider now ships a separate reasoning cap. This is the single most under-used feature in the agent builder's toolkit.

OpenAI GPT-5 exposes reasoning_effort with model-dependent values: none, minimal, low, medium, high, and xhigh. The exact set varies by model (GPT-5 supports minimal through high; GPT-5.2 and later add none; Codex variants add xhigh). Published benchmarks and community measurements put the reasoning-volume gap between minimal and the top setting in roughly a 10x range for the same prompt.

Defaults matter here. GPT-5.4 defaults to none (no reasoning). Older GPT-5 models default to medium. If you did not explicitly set it on an older model, you are on medium and paying a material multiple of what minimal would cost.

Anthropic Claude extended thinking exposes thinking.budget_tokens, a hard cap on the thinking budget in tokens (minimum 1,024). Set it to around 2,000 for simple CX turns. Set it to 16,000 for research or multi-step planning. Anthropic stops thinking when the cap is hit and proceeds to the visible response. Newer Claude models also support adaptive thinking, which dynamically chooses the budget, so check the current Claude docs before hard-coding a value for the latest model.

Google Gemini 3.1 Pro exposes a thinking mode toggle and a token budget similar to Anthropic. Gemini reports thinking tokens separately in the usage object.

The pattern to adopt:

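    import OpenAI from "openai";
    import Anthropic from "@anthropic-ai/sdk";

    const openai = new OpenAI();       // reads OPENAI_API_KEY from the environment
    const anthropic = new Anthropic(); // reads ANTHROPIC_API_KEY from the environment

    // Simple CX turn: no thinking needed (userMessage comes from your app)
    const refund = await openai.responses.create({
      model: "gpt-5",
      // The Responses API nests effort under `reasoning`;
      // `reasoning_effort` is the Chat Completions spelling.
      reasoning: { effort: "minimal" },
      input: [{ role: "user", content: userMessage }],
    });

    // Complex planning turn: budget capped (planPrompt comes from your app)
    const plan = await anthropic.messages.create({
      model: "claude-opus-4-7",
      max_tokens: 8000,
      thinking: { type: "enabled", budget_tokens: 4000 },
      messages: [{ role: "user", content: planPrompt }],
    });

The defaults are aggressive because they are optimized for benchmark scores. Your agent is not a benchmark. It handles refunds, order lookups, and appointment changes. Override the default.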

Budget Math per Turn for a CX Agent

Here is what a per-turn reasoning budget looks like for a production customer-experience agent. These numbers are directional. Your own ratios will vary. But they show the shape of a realistic budget.

| Turn type | Visible output budget | Reasoning budget | Reasoning effort | Why |
|---|---|---|---|---|
| Intent classification | 50 tokens | 0 tokens | minimal / disabled | Routing is a lookup, not a reasoning task |
| FAQ answer | 200 tokens | 500 tokens | low | Some retrieval reasoning, short answer |
| Order lookup + reply | 300 tokens | 1,000 tokens | low | Tool call, simple synthesis |
| Refund decision (policy check) | 400 tokens | 4,000 tokens | medium | Real policy reasoning matters |
| Plan-and-execute supervisor | 1,000 tokens | 8,000 tokens | high | Multi-step plans justify the cost |
| Worker tool-call execution | 200 tokens | 500 tokens | minimal | The plan already did the thinking |

Notice the split between supervisor and worker. The planner pays for reasoning. The executor does not. This is the single biggest savings lever in plan-and-execute agent architectures and it composes naturally with model routing: supervisor on GPT-5 high-reasoning, workers on GPT-5 mini with reasoning disabled.

A CX agent built this way, handling the same 500 conversations per day as the example above, typically lands at $800 to $1,400/month in reasoning spend, versus the $3,600 you get by leaving everything on default medium reasoning.
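One way to make the budget table enforceable is to keep it as data and derive request parameters from it. The sketch below is illustrative only: the turn-type keys and helper names are hypothetical, the numbers are the directional ones from the table, and the Anthropic helper assumes the 1,024-token minimum for budget_tokens mentioned earlier, so smaller budgets are rounded up rather than sent as-is.

```typescript
type Effort = "minimal" | "low" | "medium" | "high";
type TurnType =
  | "intent_classification"
  | "faq_answer"
  | "order_lookup"
  | "refund_decision"
  | "supervisor_plan"
  | "worker_tool_call";

interface TurnBudget {
  visibleTokens: number;
  reasoningTokens: number;
  effort: Effort;
}

// Directional numbers from the table above; tune them against your own logs.
const TURN_BUDGETS: Record<TurnType, TurnBudget> = {
  intent_classification: { visibleTokens: 50, reasoningTokens: 0, effort: "minimal" },
  faq_answer: { visibleTokens: 200, reasoningTokens: 500, effort: "low" },
  order_lookup: { visibleTokens: 300, reasoningTokens: 1000, effort: "low" },
  refund_decision: { visibleTokens: 400, reasoningTokens: 4000, effort: "medium" },
  supervisor_plan: { visibleTokens: 1000, reasoningTokens: 8000, effort: "high" },
  worker_tool_call: { visibleTokens: 200, reasoningTokens: 500, effort: "minimal" },
};

const ANTHROPIC_MIN_THINKING_BUDGET = 1024;

type AnthropicThinking =
  | { type: "disabled" }
  | { type: "enabled"; budget_tokens: number };

// Anthropic: thinking budget plus visible budget must fit inside max_tokens.
function anthropicParamsFor(turn: TurnType): { max_tokens: number; thinking: AnthropicThinking } {
  const b = TURN_BUDGETS[turn];
  if (b.reasoningTokens === 0) {
    return { max_tokens: b.visibleTokens, thinking: { type: "disabled" } };
  }
  const budgetTokens = Math.max(b.reasoningTokens, ANTHROPIC_MIN_THINKING_BUDGET);
  return {
    max_tokens: b.visibleTokens + budgetTokens,
    thinking: { type: "enabled", budget_tokens: budgetTokens },
  };
}

// OpenAI Responses API: effort is a hint, max_output_tokens is the shared ceiling.
function openaiParamsFor(turn: TurnType) {
  const b = TURN_BUDGETS[turn];
  return {
    max_output_tokens: b.visibleTokens + b.reasoningTokens,
    reasoning: { effort: b.effort },
  };
}

console.log(anthropicParamsFor("refund_decision"), openaiParamsFor("worker_tool_call"));
```

Because effort on OpenAI is not a hard token cap, the combined ceiling may need extra headroom on turn types where truncation shows up in your logs.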

Interactive Thinking Changes the Math

The newer class of interactive thinking models, where the model can stream visible reply tokens while still reasoning, changes when reasoning cost shows up but not how much it costs. You still bill the full reasoning volume at output rates. What changes is latency. The user sees the first visible token sooner, which makes the agent feel faster, but the final invoice is the same.

For CX this matters because latency perception drives transfer-to-human rates. A 4-second reasoning pause with no visible output reads as a dead agent. The same 4-second reasoning with tokens streaming reads as a thoughtful agent. Same cost. Different NPS.

Do not confuse the UX improvement with a cost improvement. Teams that deploy interactive thinking, see better CSAT, and then forget to cap reasoning_effort because "it feels fast now" still get the same bill. The invoice does not care about perceived latency.

Where Should Reasoning-Token Data Live in Your Monitoring Stack?

Reasoning token data needs to live in three places:

- Per-turn logs. Log reasoning_tokens, visible_output_tokens, max_output_tokens, finish_reason, and the effort/budget parameter alongside every turn. This is non-negotiable.

- Agent-level dashboards. Roll up reasoning ratio and reasoning cost share across all turns for each agent. Sort agents by reasoning cost descending. You will be surprised which ones top the list.

- Alerts. Alert when an agent's reasoning cost share exceeds a threshold (e.g., 50%) over a rolling window. Alert when finish_reason: "length" occurs more than 1% of the time. Both are symptoms of reasoning budget misconfiguration.
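The two alert rules can be evaluated over whatever window of per-turn logs your stack already keeps. This is a sketch, not a prescription for any particular monitoring tool: `evaluateWindow`, `TurnLog`, and the alert kinds are hypothetical names, and the defaults are the 50% and 1% thresholds mentioned above.

```typescript
interface TurnLog {
  reasoningCostUsd: number;
  totalCostUsd: number;
  finishReason: string; // finish_reason or stop_reason as logged
}

interface AlertThresholds {
  maxReasoningCostShare: number; // e.g. 0.5
  maxTruncationRate: number;     // e.g. 0.01
}

interface Alert {
  kind: "reasoning_cost_share" | "truncation_rate";
  value: number;
  threshold: number;
}

const DEFAULT_THRESHOLDS: AlertThresholds = {
  maxReasoningCostShare: 0.5,
  maxTruncationRate: 0.01,
};

// Evaluate one rolling window of turns for a single agent.
function evaluateWindow(turns: TurnLog[], thresholds: AlertThresholds = DEFAULT_THRESHOLDS): Alert[] {
  if (turns.length === 0) return [];
  let reasoningCost = 0;
  let totalCost = 0;
  let truncated = 0;
  for (const t of turns) {
    reasoningCost += t.reasoningCostUsd;
    totalCost += t.totalCostUsd;
    // "length" (OpenAI) and "max_tokens" (Anthropic) both mean the cap was hit.
    if (t.finishReason === "length" || t.finishReason === "max_tokens") truncated += 1;
  }
  const share = totalCost === 0 ? 0 : reasoningCost / totalCost;
  const truncationRate = truncated / turns.length;
  const alerts: Alert[] = [];
  if (share > thresholds.maxReasoningCostShare) {
    alerts.push({ kind: "reasoning_cost_share", value: share, threshold: thresholds.maxReasoningCostShare });
  }
  if (truncationRate > thresholds.maxTruncationRate) {
    alerts.push({ kind: "truncation_rate", value: truncationRate, threshold: thresholds.maxTruncationRate });
  }
  return alerts;
}

// 200 turns, 60% of cost in reasoning, 3 truncated replies (1.5%).
const windowTurns: TurnLog[] = Array.from({ length: 200 }, (_, i) => ({
  reasoningCostUsd: 0.06,
  totalCostUsd: 0.1,
  finishReason: i < 3 ? "length" : "stop",
}));
const firedAlerts = evaluateWindow(windowTurns);
console.log(firedAlerts.map((a) => a.kind));
```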

For teams on Chanl Monitor, this is where we are heading: reasoning tokens as a separate column on every interaction, and Scorecards that can flag turns where the reasoning ratio exceeds your configured ceiling. The point is not which tool you use. The point is that "output tokens" as a single number is no longer enough. You need the split.

What to Do Monday Morning

If you run a production agent on a reasoning-enabled model, do these five things this week:

- Log reasoning_tokens (OpenAI) or thinking token counts (Claude/Gemini) as a separate field on every turn. Most teams are not doing this yet.

- Compute the reasoning ratio across the last 10,000 turns. If it is above 3:1, you have optimization headroom.

- Audit your reasoning_effort / budget_tokens settings. Most defaults are too high for CX.

- Split supervisor and worker calls. Put reasoning on the supervisor only.

- Set alerts on finish_reason: "length". Silent truncation is the worst failure mode because users see a broken reply, not an error.

The shift from GPT-4o to GPT-5 and from Sonnet without thinking to Opus with extended thinking is not a 2x price bump like the headline numbers suggest. Depending on how much thinking your agent does, it is a 3x to 10x bump on the reasoning-heavy turns. Teams that budget for this catch it at deploy time. Teams that do not catch it on the first invoice.

Treat reasoning tokens like you treat any other variable cost: measure, cap, and budget. The infrastructure to do it exists on every major provider. What is missing is the habit.

Sources

- OpenAI — Reasoning models API guide

- OpenAI Developer Community — Reasoning tokens hidden price question

- OpenAI Developer Community — GPT-5 mini output token billing confusion

- Anthropic — Claude API Pricing

- Anthropic — Extended thinking

- Simon Willison — GPT-5 model card and pricing

- Azure OpenAI — Reasoning models

- OpenRouter — Reasoning tokens best practices

- Finout — Anthropic API pricing 2026

- Apiyi — Gemini 3.1 Pro thinking tokens explained

- Felix Horvat — Reasoning tokens tested on 5 LLMs via OpenRouter

- Vellum — LLM Leaderboard 2026

