Articles tagged “ai-agents”

79 articles

Your Agent Has Observability. It Doesn't Have Measurement.

89% of AI teams added observability. 52% added evals. But only 31% can say whether their agent is getting better or worse. Here's the difference between watching your agent and actually measuring it.

Build a Nurture Agent That Decides Not to Send

Most nurture sequences are 14 emails on a calendar. The fix is an event-triggered agent whose most valuable action is "wait." Here's the worker.

Your CX Agent Doesn't Care Who Won SWE-Bench. Here's Who Actually Wins.

SWE-bench crowns a coding king. Customer experience agents answer to a different benchmark, tau-bench, and the rankings flip. The head-to-head that actually predicts production reliability.

Stop Using SWE-Bench to Pick Your CX Model

SWE-bench scores come out at 85% or 23% depending on the harness, and neither number measures customer experience. Why tau-bench, tau2-bench, and pass^k matter for CX agents.

Stop Building Dashboards. Start Shipping Signal.

Dashboards tell VPs what happened last quarter. Signal tells them which account to call today, and why. How CX is exiting the post-dashboard era in 2026.

Your Conversations Are Already CRM Data. Here's How to Use Them.

Every customer call carries churn risk, expansion intent, and compliance signal. Most teams toss it. Here's how to turn conversations into live CRM data.

How Much Testing Is Enough for Your AI Agent?

Code coverage doesn't apply to AI agents. Here's a framework for thinking about evaluation coverage: how many scenarios you need, what distribution to target, and how to know when you've tested enough.

Your LLM-as-judge may be highly biased

LLM-as-Judge has 12 documented biases. Here are 6 evaluation methods production teams actually use instead, with code examples and patterns.

7 FastMCP mistakes that break your agent in production

FastMCP servers that work locally often fail at scale. Seven common mistakes, from missing annotations to monolithic tool sets, and how to fix each one.

GDPR says delete. EU AI Act says keep. Now what?

GDPR requires deletion on request. The EU AI Act requires 10-year audit trails. Here's how to architect agent memory that satisfies both simultaneously.

We open-sourced our AI agent testing engine

chanl-eval is an open-source engine for stress-testing AI agents with simulated conversations, adaptive personas, and per-criteria scorecards. MIT licensed.

Claude Code subagents and the orchestrator pattern

How to structure Claude Code subagents, write dispatch prompts, and coordinate parallel work across services, SDKs, and frontends in a monorepo.

Graph memory for AI agents: when vector search isn't enough

Build graph memory for AI agents in TypeScript and Python. Extract entities, track relationships over time, and compare Mem0, Zep, and Letta in production.

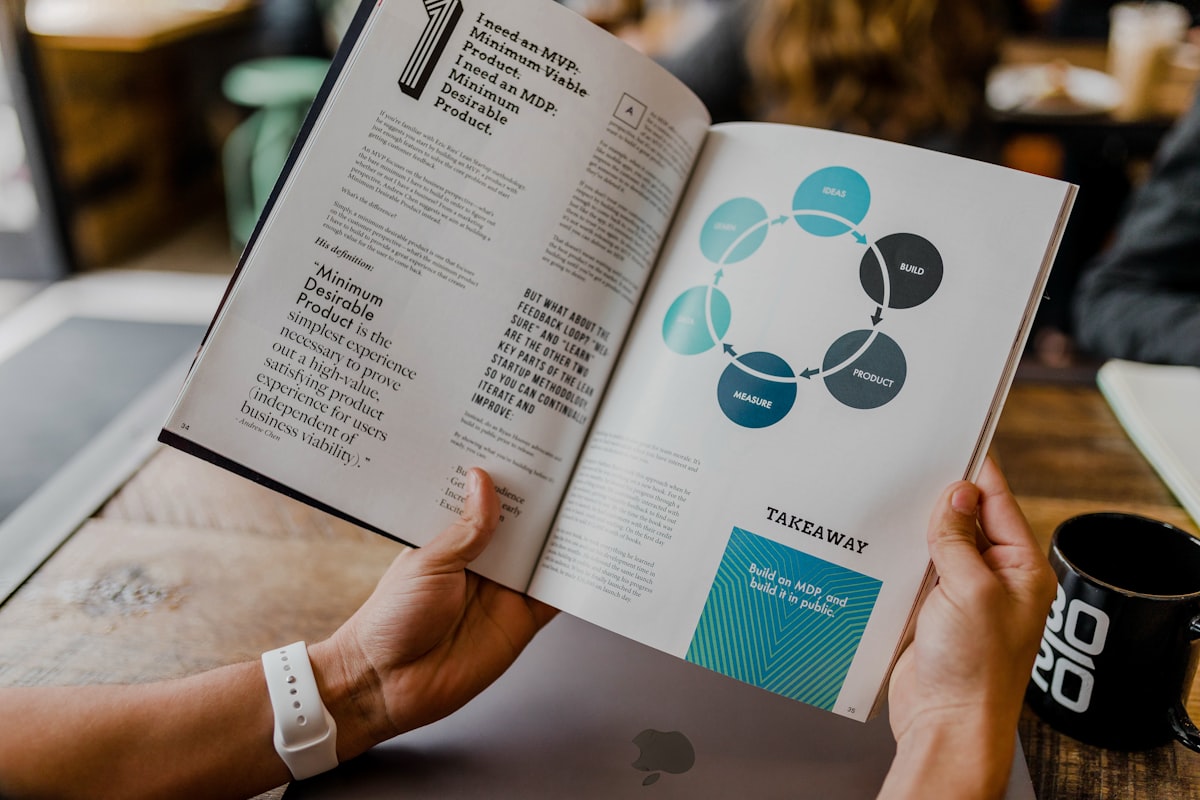

AI Agent Frameworks Compared: Which Ones Ship?

An honest comparison of 9 AI agent frameworks (LangGraph, CrewAI, Vercel AI SDK, Mastra, OpenAI Agents SDK, Google ADK, Microsoft Agent Framework, Pydantic AI, AutoGen) based on what developers actually ship to production in 2026.

Build an AI Agent Observability Pipeline from Scratch

Build a production observability pipeline for AI agents using TypeScript and the Chanl SDK. Covers metrics, traces, quality scoring, drift detection, and alerting.

Your AI Agent's Context Window Is Already Half Full

System prompts, tool schemas, MCP descriptions, memory injection, conversation history. They all eat tokens before the user says a word. Learn where your context budget goes and how to manage it.

Agent Drift: Why Your AI Gets Worse the Longer It Runs

AI agents silently degrade over long conversations. Research quantifies three types of drift and shows why point-in-time evals miss them entirely.

Your Agent Completed the Task. It Also Forgot 87% of What It Knew.

Task completion hides a silent failure: agents forget 87% of stored knowledge under complexity. New research reveals why standard evals miss this entirely.

NIST Red-Teamed 13 Frontier Models. All of Them Failed.

NIST ran 250K+ attacks against every frontier model. None survived. Here's what the results mean for teams shipping AI agents to production today.

Your Agent Is Getting Smarter. It's Not Getting More Reliable.

Reliability improves at half the rate of accuracy. Three 85%+ tools combine to just 74%. Here's the math, the research, and the testing protocols that close the gap.

The Auto Shop That Knows Your Car Better Than You Do

Build an AI phone agent for auto repair shops that answers calls, quotes brake jobs, remembers every vehicle, and sends maintenance reminders.

A Dental Receptionist That Works Nights and Weekends

Build an AI receptionist for dental clinics that answers insurance questions, books appointments, and captures after-hours leads. Five clients pay $1,500/month.

50 Tools, Zero Memory. The Biggest Gap in AI Agents Today

AI agents can call 50 APIs but can't remember what you said yesterday. The tool layer is years ahead of the memory layer, and customers are paying the price.

Function Calling: Build a Multi-Tool AI Agent from Scratch

Build a multi-tool AI agent from scratch using function calling across OpenAI, Anthropic, and Google. Runnable TypeScript and Python code, validation with Zod and Pydantic, and production hardening patterns.

The RAG You Built Last Year Is Already Outdated

RAG has branched into 5 distinct architectures: Self-RAG, Corrective RAG, Adaptive RAG, GraphRAG, and Agentic RAG. Here's when to use each and how to choose.

Your RAG Returns Wrong Answers. Upgrading the Model Won't Help

Most RAG quality problems are retrieval problems, not model problems. Bad chunking, wrong embeddings, and missing re-ranking cause more hallucinations than model capability gaps.

Why MCP Exists: Tool Calling Shouldn't Need Adapter Code

OpenAI, Anthropic, and Google all implement function calling differently. MCP is emerging as the standard that saves developers from writing adapter code for every provider.

Every Contact Center Job Is Changing. Here's What That Actually Looks Like

AI isn't eliminating contact center roles. It's hollowing out the repetitive parts and elevating the rest. Here's what human-AI collaboration actually looks like on the floor, and what it means for how you build and manage your team.

Customers Don't Trust AI Voices. Here's What Actually Changes That

More than half of users instinctively distrust AI voices, not because the technology is broken, but because most deployments hide the wrong things and reveal nothing useful. Here's what transparency and UX actually do to close the gap.

Your RAG Pipeline Is Answering the Wrong Question

Naive RAG scores 42% on multi-hop questions. Agentic RAG hits 94.5%. The difference: letting the agent decide what to retrieve, when, and whether the results are good enough. Build both in TypeScript and Python.

Your Agent Aced the Benchmark. Production Disagreed.

We scored 92% on GAIA. Production CSAT: 64%. Here's which AI agent benchmarks actually predict deployed performance, why most don't, and what to measure instead.

Your Agent Remembers Everything Except What Matters

ICLR 2026 MemAgents research reveals when AI agents need episodic memory (what happened) vs. semantic memory (what's true). Covers the MAGMA, Mem0, and AdaMem papers, a comparison of Mem0 vs. Letta vs. Zep, and architecture patterns with TypeScript examples.

A 7B Domain Model Beat Everything We Tried

Domain-specific language models are beating trillion-parameter generalists on vertical tasks. Here's when a 7B model is the right call, how the training pipeline works, and what production teams are shipping today.

The AI Agent Dashboard of 2026: What Teams Actually Need to See

Traditional dashboards tell you what went wrong yesterday. The AI agent dashboards teams actually need deliver feedback in the moment, during the call, not after it. Here's what that looks like in practice.

A 1B Model Just Matched the 70B. Here's How.

How to distill frontier LLMs into small, cheap models that retain 98% accuracy on agent tasks. The teacher-student pattern, NVIDIA's data flywheel, and the Plan-and-Execute architecture that cuts agent costs by 90%.

The Multi-Agent Pattern That Actually Works in Production

Gartner reports a 1,445% surge in multi-agent system inquiries. Here are the orchestration patterns that actually work when real customers call, and why most teams pick the wrong one.

Stop Reacting to Bad Calls. Catch Problems Before Customers Do

By the time a customer complains, you've already lost. Real-time analytics lets AI agent teams catch failing conversations mid-flight, not in the post-mortem. Here's how to build a proactive monitoring stack that prevents pain instead of documenting it.

Your AI Agent Has No Guardrails

Air Canada honored a refund its chatbot hallucinated. DPD's bot cursed at customers on camera. One e-commerce agent approved $2.3M in unauthorized refunds at 2:47 AM. Here is the five-layer guardrail architecture that prevents all three.

Every Tool Is an Injection Surface

Prompt injection moved from chat to tool calls. Anthropic, OpenAI, and Arcjet shipped defenses in the same month. Here's what changed, what works, and what your agent architecture needs now.

Part 1: Claude's 7 Extension Points — The Mental Model

CLAUDE.md, Skills, Hooks, MCP Servers, Connectors, Claude Apps, Plugins — Claude's extension ecosystem is powerful but confusing. Here's the mental model that makes sense of all 7.

Part 2: CLAUDE.md, Hooks, and Skills — Three Layers

CLAUDE.md sets conventions. Hooks enforce them. Skills teach workflows. Understanding these three layers — and their reliability spectrum — is the key to a Claude Code setup that actually works.

Part 3: MCP Servers vs. Connectors vs. Apps

All Claude Apps are Connectors. All Connectors are MCP Servers. Understanding this hierarchy — and when to build vs. use managed integrations — saves weeks of unnecessary engineering.

Part 4: All 7 Extension Points in One Production Codebase

50+ skills, multiple MCP servers, scoped rules, safety hooks — here's how all 7 Claude extension points compose in a real NestJS monorepo with 17 projects. What works, what fights, and what we'd do differently.

AI Agents Are Great. Until They're Not. When to Put Humans Back in Control

AI agents can handle 80% of your customer interactions with no problem. The other 20% is where your reputation is made or broken. Here's how to design escalation that actually works.

Zero-Shot or Zero Chance? How AI Agents Handle Calls They've Never Seen Before

When a customer calls with a request your AI agent has never encountered, what actually happens? We break down the mechanics of zero-shot handling, and how to test for it before it fails in production.

Your Agent Passed Every Dev Test. Here's Why It'll Fail in Production

A 4-layer testing framework for AI agents (unit, integration, performance, and chaos testing) so your agent survives real customers, not just controlled demos.

MCP Is Now the Industry Standard for AI Agent Integrations. Here's What That Means

MCP standardizes how AI agents connect to tools and data, replacing fragile, proprietary integrations with a universal protocol. Here's what it means for your agents.

Claude 4.6 broke our production agent in two hours — here's what's worth the migration

A practical developer guide to Claude 4.6 — adaptive thinking, 1M context, compaction API, tool search, and structured outputs. Real code examples in TypeScript and Python for building production AI agents.

71% of organizations aren't prepared to secure their AI agents' tools

MCP gives AI agents autonomous access to real systems — and introduces attack vectors that traditional security can't see. A technical breakdown of tool poisoning, rug pulls, cross-server shadowing, and the defense framework production teams need now.

MCP Streamable HTTP: The Transport Layer That Makes AI Agents Production-Ready

MCP's Streamable HTTP transport replaced the original SSE transport to fix critical production gaps. This guide covers what changed, why it matters, and how to implement it in TypeScript with code examples.

Conversational AI vs. Agentic AI: What's the Difference, and Why It Matters for CX Teams

Conversational AI follows scripts. Agentic AI pursues goals. Here's the exact difference, with a side-by-side comparison and a practical guide to choosing the right approach for customer experience.

Your agent has 30 tools and no idea when to use them

MCP tools give agents external capabilities. Skills give agents behavioral expertise. Learn the architecture of both, build them in TypeScript, and understand when to use each — and when you need both.

The Death of the Decision Tree: Why Rule-Based Bots Can't Survive Real Conversations

Scripted voicebots break the moment customers go off-script, which is most of the time. Here's exactly how decision trees fail, what agentic AI changes at the architecture level, and how to make the transition without a catastrophic cutover.

AI Agent Memory: From Session Context to Long-Term Knowledge

Build AI agent memory systems from scratch in TypeScript. Covers memory types (session, episodic, semantic, procedural), architectures (buffer, summary, vector retrieval), RAG intersection, and privacy-first design.

Build your own AI agent memory system — what breaks when real users show up?

Build a complete memory system for customer-facing AI agents — session context, persistent recall, semantic search. Then learn what breaks when real customers start returning.

Build your own AI agent tool system — what breaks when you add the 20th tool?

Build a complete tool system for customer-facing AI agents from scratch — registry, execution, auth, monitoring. Then learn what breaks when real customers start calling.

Call Logs Aren't Just Records. They're Your Best Product Feedback Loop

Most teams treat call logs as a compliance archive. The teams winning with AI agents treat them as a real-time signal about what's working, what's breaking, and what customers actually want.

Multi-Agent AI Systems: Build an Agent Orchestrator Without a Framework

Build a multi-agent system from scratch — delegation, planning loops, and inter-agent communication — before reaching for LangGraph or CrewAI.

Voice AI Escaped the Call Center. Here's Where It Landed.

From $50K M&A due diligence to 9 million burger orders, voice AI agents are breaking into verticals nobody predicted. Here's what developers need to know.

Your AI agent remembers everything — should your customers be worried?

Privacy-first memory design for AI agents: what to store, what to forget, how to give customers control, and how to stay compliant across GDPR, HIPAA, and multi-channel deployments.

Prompt Engineering Is Dead. Long Live Prompt Management.

Why production AI teams need version control, A/B testing, and rollback for prompts — not just clever writing. The craft has changed.

Scenario Testing: The QA Strategy That Catches What Unit Tests Miss

Discover how synthetic test conversations catch edge cases that unit tests miss. Personas, adversarial scenarios, and regression testing for AI agents.

Scorecards vs. Vibes: How to Actually Measure AI Agent Quality

Most teams 'feel' their AI agent is good. Here's how to build structured scoring with rubrics, automated grading, and regression detection that holds up.

Edge AI for Voice Agents: Fix Latency and Privacy at the Source

How edge AI eliminates 50-200ms of latency and entire classes of privacy risks for voice agents — with hybrid architecture patterns and TypeScript examples.

Voice AI Can Read Your Mood — Here's What That Changes

How emotion-aware voice AI detects customer sentiment in real time, adapts responses, and cuts escalations by 25-40% — plus the ethics you can't ignore.

Voice Commerce Hit $50B. Here's How Amazon, Google, and Apple Are Splitting It

Analyze the explosive growth of voice commerce and how Amazon, Google, and Apple are competing to dominate voice-activated shopping experiences.

Smarter Escalation: When Should Voice AI Refuse to Answer?

Industry research shows that 60-65% of enterprises struggle with AI escalation decisions, leading to customer frustration and compliance risks. Discover when voice AI should refuse to answer and how to build smarter escalation frameworks.

Agentic AI Liability: Who's Responsible for What When Things Go Wrong?

Industry research shows that 80-85% of enterprises lack clear liability frameworks for agentic AI failures. Discover how to establish responsibility structures that protect your organization while enabling AI innovation.

70% of Enterprises Are Ripping Out Their IVRs. Here's Why, and What Replaces Them

Industry research shows that 70-75% of enterprises are phasing out IVRs in favor of conversational AI. Here's how to build transitions that preserve customer experience while modernizing operations.

Conversation as a Service: Will the Next SaaS Giants Be Voice-First?

Voice-first SaaS is generating real revenue, but not in the way most people predicted. Here's an honest look at what's working, what's hype, and whether conversation platforms will produce the next generation of software giants.

How LLMs Changed Agent Training Forever: From Writing Rules to Writing Prompts

LLMs didn't just improve agent training. They changed the entire discipline. Here's what actually shifted, what works in production, and what the industry still gets wrong.

Prompt engineering vs. context engineering: What's the next step for voice AI?

While prompt engineering focuses on perfecting inputs, context engineering optimizes the entire conversation environment. Discover why context engineering is becoming the key differentiator in voice AI.

Digital Twins for AI Agents: Simulate Before You Ship

Build digital twins that test your AI agent against thousands of synthetic customers. Architecture, TypeScript code, and the patterns that catch failures.

Fail Fast, Speak Fast: Why Iteration Speed Beats Initial Accuracy for AI Agents

The teams winning with AI agents are not the ones with the best v1. They are the ones who improve fastest after launch. Here's how to build a rapid iteration engine for conversational AI.

What HIPAA Taught Us About AI Security (And It Applies to Every Industry)

Healthcare didn't choose to build the most rigorous data security framework in existence. It was forced to. Three decades later, that framework turns out to be the best blueprint for securing AI agents in any industry.

Can AI learn to apologize? The uncomfortable truth about synthetic empathy

Industry research shows that 55-60% of enterprises are exploring synthetic empathy in AI systems. Discover the ethical implications and practical applications of AI emotional intelligence.

The Voice AI Quality Crisis: Why Most Deployments Fail in Production

Most voice AI deployments fail in production despite passing lab tests. Real data on why the gap exists, what it costs, and how to close it.

Why 75% of AI chatbots fail complex issues — and what the other 25% do differently

Industry research reveals 75% of customers believe chatbots struggle with complex issues. Learn why this happens and discover proven testing strategies to dramatically improve your AI agent performance.

The Human Touch: Why 90% of Customers Still Choose People Over AI Agents

Despite AI advances, 90% of customers prefer human agents for service. Discover what customers really want from AI interactions and how to bridge the trust gap through rigorous testing.
