Learning AI Articles

32 articles · Page 2 of 3

Embeddings Turn Text Into Meaning. Here's the Math and the Code

What embeddings are, how similarity search works under the hood, and how to build a semantic search engine, from cosine similarity math to production vector databases.

Function Calling: Build a Multi-Tool AI Agent from Scratch

Build a multi-tool AI agent from scratch using function calling across OpenAI, Anthropic, and Google. Runnable TypeScript and Python code, validation with Zod and Pydantic, and production hardening patterns.

Your RAG Pipeline Is Answering the Wrong Question

Naive RAG scores 42% on multi-hop questions. Agentic RAG hits 94.5%. The difference: letting the agent decide what to retrieve, when, and whether the results are good enough. Build both in TypeScript and Python.

Context Engineering Is What Your Agent Actually Needs

Prompt engineering hits a wall with production AI agents. Context engineering fixes it. Build a full context pipeline with memory, RAG, history compression, and tool resolution.

A 7B Domain Model Beat Everything We Tried

Domain-specific language models are beating trillion-parameter generalists on vertical tasks. Here's when a 7B model is the right call, how the training pipeline works, and what production teams are shipping today.

Fine-Tune a 7B Model for $1,500 (Not $50,000)

Full fine-tuning costs $50K in H100s. QLoRA on an RTX 4090 costs $1,500. Learn how LoRA and QLoRA let you train only 0.1-1% of parameters with nearly identical results. Includes working code for fine-tuning models that understand your agent's tool schemas.

A 1B Model Just Matched the 70B. Here's How.

How to distill frontier LLMs into small, cheap models that retain 98% accuracy on agent tasks. The teacher-student pattern, NVIDIA's data flywheel, and the Plan-and-Execute architecture that cuts agent costs by 90%.

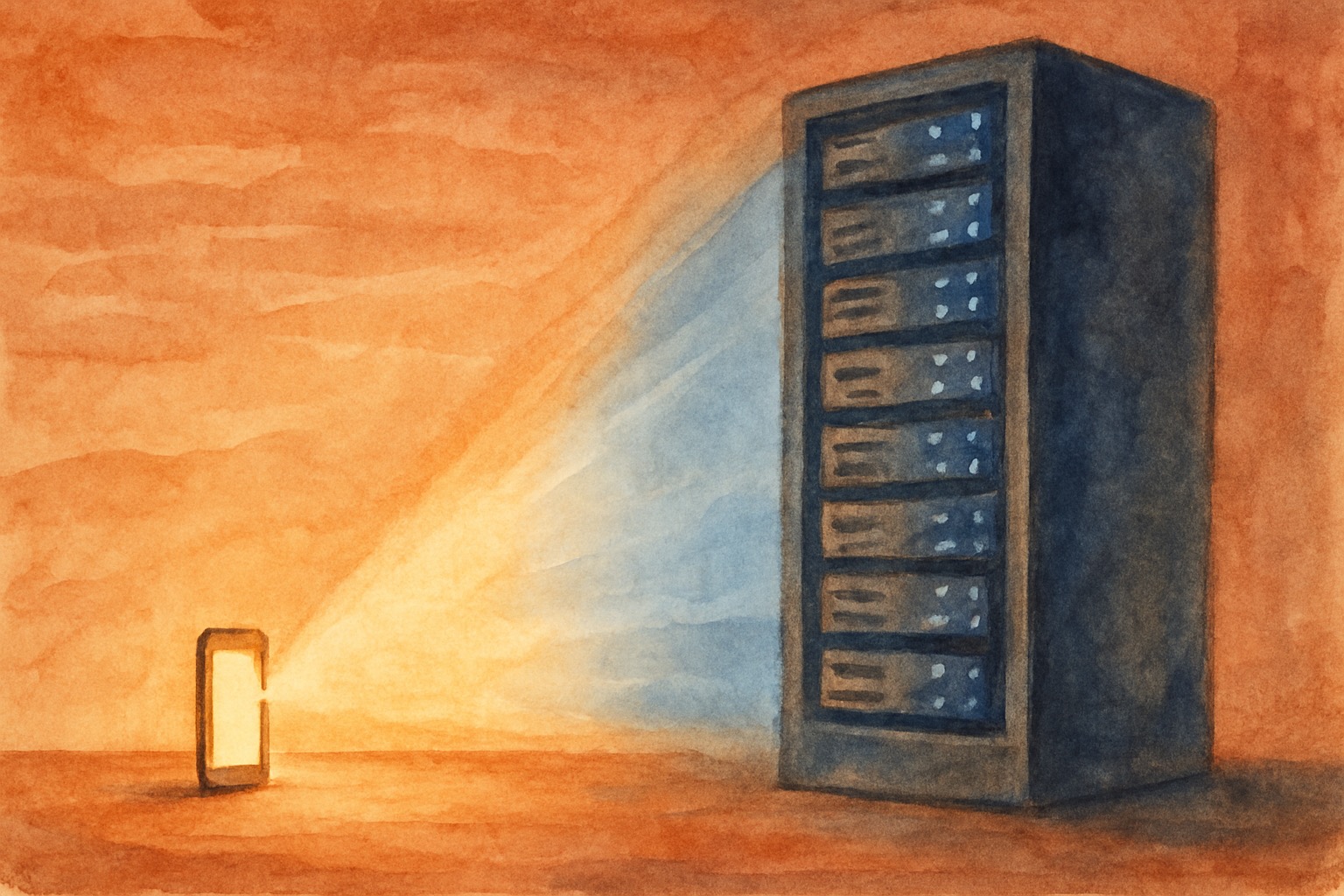

Why Your AI Bill Is 30x Too High

Small language models match GPT-3.5 at 2% of the size and 95% lower cost. Benchmarks, code, and a migration story from $13K/month to $400/month.

Part 1: Claude's 7 Extension Points — The Mental Model

CLAUDE.md, Skills, Hooks, MCP Servers, Connectors, Claude Apps, Plugins — Claude's extension ecosystem is powerful but confusing. Here's the mental model that makes sense of all 7.

Part 2: CLAUDE.md, Hooks, and Skills — Three Layers

CLAUDE.md sets conventions. Hooks enforce them. Skills teach workflows. Understanding these three layers — and their reliability spectrum — is the key to a Claude Code setup that actually works.

Part 3: MCP Servers vs. Connectors vs. Apps

All Claude Apps are Connectors. All Connectors are MCP Servers. Understanding this hierarchy — and when to build vs. use managed integrations — saves weeks of unnecessary engineering.

Part 4: All 7 Extension Points in One Production Codebase

50+ skills, multiple MCP servers, scoped rules, safety hooks — here's how all 7 Claude extension points compose in a real NestJS monorepo with 17 projects. What works, what fights, and what we'd do differently.