Articles tagged “testing”

53 articles

AI Agent KPIs: What to Measure Before You Ship

Only 31% of teams have a measurement framework for their AI agents. Here's how to define task completion rate, escalation rate, cost per outcome, and CSAT delta before your first production interaction.

Past 50 tools, function-calling accuracy falls off a cliff

Measure the curve on your own agent and recover accuracy with per-turn toolset scoping.

GPT-5, Claude 4.5, Gemini Score the Same Calls. Their Kappa Is 0.52

Run the same calls through GPT-5, Claude 4.5, and Gemini and Cohen's kappa lands at 0.52. Here is how to measure judge agreement on your own corpus.

MCP tool description drift: the silent failure nobody alerts on

Edit an MCP tool description for clarity, lose 8% routing accuracy, and the eval suite stays green. How to detect, gate, and roll back the drift.

When to Use a Supervisor, When to Let Agents Swarm

Supervisor burns 20-40% more tokens per run. Swarm hits a quality cliff past 8-10 handoffs. Start supervisor, graduate to swarm when latency bites.

Stop Using SWE-Bench to Pick Your CX Model

SWE-Bench scores 85% or 23% depending on the harness, and neither measures customer experience. Why tau-bench, tau2-bench, and pass^k matter for CX agents.

Every Conversation Is an Experiment You Didn't Run

Your agent already ran the A/B test you're scoping. Here's how to read the results in your logs with propensity matching, synthetic control, and diff-in-diff.

Every Failed Call Is a Test Case You Haven't Written Yet

The gap between staging and production for AI agents is measured in surprise. Here's how to close the loop from live failure to regression gate.

How Much Testing Is Enough for Your AI Agent?

Code coverage doesn't apply to AI agents. Here's a framework for thinking about evaluation coverage: how many scenarios you need, what distribution to target, and how to know when you've tested enough.

Your LLM-as-judge may be highly biased

LLM-as-Judge has 12 documented biases. Here are 6 evaluation methods production teams actually use instead, with code examples and patterns.

Is monitoring your AI agent actually enough?

Research shows 83% of agent teams track capability metrics but only 30% evaluate real outcomes. Here's how to close the gap with multi-turn scenario testing.

Memory bugs don't crash. They just give wrong answers.

Memory bugs don't crash your agent. They just give subtly wrong answers using stale context. Here are 5 test patterns to catch them before customers do.

The 17x error trap in multi-agent systems

Multi-agent systems amplify errors 17x instead of reducing them. We compare CrewAI, LangGraph, and AutoGen failure modes with concrete fixes and a decision tree.

The no-code ceiling: when agent builders hit production

Visual agent builders get you to 80% fast. The last 20% (telephony, monitoring, testing, and memory) requires infrastructure they never intended to provide.

Online vs. Offline Evals: Close the Production Gap

89% of teams have observability but only 37% run online evals. Here's why that gap is where production failures hide, and how to close it with a practical online eval pipeline.

LLM-as-a-Judge: Build a Production Eval Pipeline

Build a production LLM-as-a-judge eval pipeline step by step. Covers judge selection, rubric design, CI integration, and sampling strategies that scale.

We open-sourced our AI agent testing engine

chanl-eval is an open-source engine for stress-testing AI agents with simulated conversations, adaptive personas, and per-criteria scorecards. MIT licensed.

Production Agent Evals: Catch Score Drift, Ship Confidently

Your evals pass in staging but miss production failures. Build three eval pipelines with the Chanl SDK: automated scorecards, scenario regression, and drift detection that catches quality degradation before customers do.

Agent Drift: Why Your AI Gets Worse the Longer It Runs

AI agents silently degrade over long conversations. Research quantifies three types of drift and shows why point-in-time evals miss them entirely.

12 Ways Your LLM Judge Is Lying to You

Research identifies 12 systematic biases in LLM-as-a-judge systems. Learn to detect and mitigate each one before they corrupt your eval pipeline.

Your Agent Completed the Task. It Also Forgot 87% of What It Knew.

Task completion hides a silent failure: agents forget 87% of stored knowledge under complexity. New research reveals why standard evals miss this entirely.

74% of Production Agents Still Rely on Human Evaluation

A survey of 306 practitioners reveals most production agents are far simpler than expected. The eval gap isn't a tooling problem. It's a trust problem.

NIST Red-Teamed 13 Frontier Models. All of Them Failed.

NIST ran 250K+ attacks against every frontier model. None survived. Here's what the results mean for teams shipping AI agents to production today.

Your Agent Is Getting Smarter. It's Not Getting More Reliable.

Reliability improves at half the rate of accuracy. Three 85%+ tools combine to just 74%. Here's the math, the research, and the testing protocols that close the gap.

Your AI Assistant Works in Demo. Then What?

Test your AI shopping assistant with AI personas that simulate real customer segments, score conversations with objective scorecards, and monitor production metrics that matter for ecommerce.

Your Agent Aced the Benchmark. Production Disagreed.

We scored 92% on GAIA. Production CSAT: 64%. Here's which AI agent benchmarks actually predict deployed performance, why most don't, and what to measure instead.

Zero-Shot or Zero Chance? How AI Agents Handle Calls They've Never Seen Before

When a customer calls with a request your AI agent has never encountered, what actually happens? We break down the mechanics of zero-shot handling, and how to test for it before it fails in production.

Your Agent Passed Every Dev Test. Here's Why It'll Fail in Production

A 4-layer testing framework for AI agents (unit, integration, performance, and chaos testing) so your agent survives real customers, not just controlled demos.

AI Agent Testing: How to Evaluate Agents Before They Talk to Customers

A practical guide to testing AI agents before production: scenario-based testing with AI personas, scorecard evaluation, regression suites, edge case generation, and CI/CD integration.

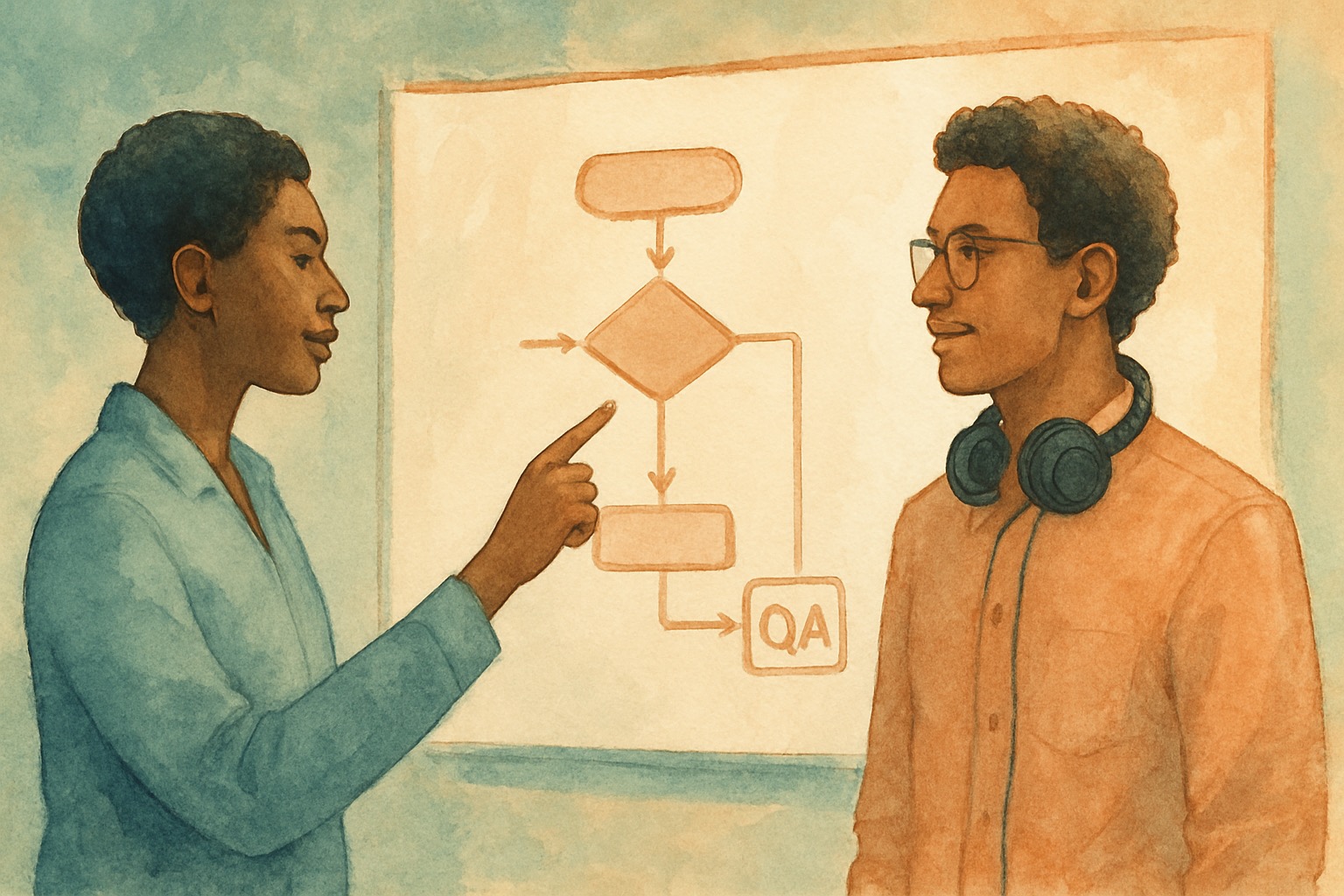

Who's Testing Your AI Agent Before It Talks to Customers?

Traditional QA validates deterministic code. AI agent QA must validate probabilistic conversations. Here's why that gap is breaking production deployments.

How to Evaluate AI Agents: Build an Eval Framework from Scratch

Build a working AI agent eval framework in TypeScript and Python. Covers LLM-as-judge, rubric scoring, regression testing, and CI integration.

Your Voice AI Platform Is Only Half the Stack

VAPI, Retell, and Bland handle voice orchestration. Memory, testing, prompt versioning, and tool integration? That's all on you. Here's what to build next.

Gartner Says 80% Autonomous by 2029. Here's What Nobody's Talking About.

Gartner predicts 80% autonomous customer service by 2029. But the gap between today's AI agents and that future requires testing, monitoring, and quality infrastructure most teams don't have.

The Knowledge Base Bottleneck: Why RAG Alone Isn't Enough for Production Agents

RAG works beautifully in demos. In production, stale data, chunking failures, and unscored retrieval quietly sink your AI agents. Here's what actually fixes it.

The MCP Marketplace Problem: Why Standardized Integrations Need Standardized Testing

5,800+ MCP servers, 43% with injection flaws. Standardized protocol doesn't mean standardized quality. Why every MCP integration needs automated testing.

Prompt Engineering Is Dead. Long Live Prompt Management.

Why production AI teams need version control, A/B testing, and rollback for prompts, not just clever writing. The craft has changed.

Real-Time Monitoring for AI Agents: What to Watch and When to Panic

What dashboards actually matter for production AI agents. Alert fatigue, anomaly detection, and the metrics that predict failures before customers notice.

Scenario Testing: The QA Strategy That Catches What Unit Tests Miss

Discover how synthetic test conversations catch edge cases that unit tests miss. Personas, adversarial scenarios, and regression testing for AI agents.

Scorecards vs. Vibes: How to Actually Measure AI Agent Quality

Most teams 'feel' their AI agent is good. Here's how to build structured scoring with rubrics, automated grading, and regression detection that holds up.

The Tool Explosion: Managing 50+ Agent Tools Without Losing Your Mind

As agents get more capable, tool sprawl becomes a real operational problem. Here's how to organize, test, and monitor function calling at scale before it breaks in production.

The Multilingual Voice AI Challenge: Breaking Language Barriers While Maintaining Quality

Explore the technical complexities of multilingual voice AI including accent adaptation, cultural context, and quality assurance across languages.

Voice AI Testing Strategies That Actually Work: A Complete Framework for Production Success

Discover the comprehensive testing framework used by top voice AI teams to achieve 95%+ accuracy rates and prevent costly production failures. Includes real case studies and actionable implementation guides.

Automated QA Grading: Are AI Models Better Call Scorers Than Humans?

Industry research shows that 75-80% of enterprises are implementing AI-powered QA grading systems. Discover whether AI models actually outperform human call scorers and how to implement effective automated grading.

Digital Twins for AI Agents: Simulate Before You Ship

Build digital twins that test your AI agent against thousands of synthetic customers. Architecture, TypeScript code, and the patterns that catch failures.

Failure Modes: What 'Accidents' in Voice AI Teach Us about Responsible Deployment

When voice AI systems fail, they don't just break. They reveal fundamental truths about how we build, deploy, and trust artificial intelligence. Discover what real-world failures teach us about responsible AI.

Fail Fast, Speak Fast: Why Iteration Speed Beats Initial Accuracy for AI Agents

The teams winning with AI agents are not the ones with the best v1. They are the ones who improve fastest after launch. Here's how to build a rapid iteration engine for conversational AI.

Performance Benchmarks for AI Agents: What Actually Matters Beyond Word Error Rate

Most enterprises obsess over Word Error Rate while missing the metrics that actually predict success. Here's what to measure instead.

Testing Bias: How to Measure and Reduce Socio-linguistic Disparities in AI

A practical guide to detecting and measuring bias in AI voice and chat agents. Covers specific metrics, testing approaches, scorecard design, and what teams actually do when they find disparities.

The Voice AI Quality Crisis: Why Most Deployments Fail in Production

Most voice AI deployments fail in production despite passing lab tests. Real data on why the gap exists, what it costs, and how to close it.

Why 75% of AI chatbots fail complex issues — and what the other 25% do differently

Industry research reveals 75% of customers believe chatbots struggle with complex issues. Learn why this happens and discover proven testing strategies to dramatically improve your AI agent performance.

The Human Touch: Why 90% of Customers Still Choose People Over AI Agents

Despite AI advances, 90% of customers prefer human agents for service. Discover what customers really want from AI interactions and how to bridge the trust gap through rigorous testing.

Voice AI Hallucinations: The Hidden Cost of Unvalidated Agents

Discover how voice AI hallucinations can cost businesses thousands daily and learn proven strategies to detect and prevent them before they reach customers.

The 12 Critical Edge Cases That Break Voice AI Agents

Uncover the most common edge cases that cause voice AI failures and learn how to test for them systematically to prevent customer frustration.
